If you're reading this, you're probably at the stage where someone - a cofounder, an investor, a new hire who keeps asking where things are - has finally made the case that your current documentation setup isn't going to cut it. The problem is that "we should probably get an ELN" quickly turns into a surprisingly complicated procurement exercise. The market is crowded, the feature lists all look the same after the third demo, and the pricing is structured in ways that make apples-to-apples comparison genuinely difficult. Add in compliance requirements you may or may not fully understand yet, a team that has opinions about software, and a runway that makes you think twice about every annual contract - and it's easy to either rush the decision or put it off indefinitely.

This guide won't tell you which platform to pick. What it will do is help you figure out what you actually need, what questions to ask, and where the real decision points are - so that when you do sit down with vendors, you're evaluating the right things.

Why Your Biotech Startup Needs an ELN from Day One

The most common version of the delay argument goes something like: we're too small, we'll deal with it when we have more people. It's understandable. In the first six to twelve months of a startup, everything is urgent and an ELN evaluation feels like overhead.

The problem is that the cost of delay is usually invisible at first. It catches up with you eventually, and by then it has been eating away at your time and resources for months. Think about how long it takes to track down information about an experiment that was run just four weeks ago. What samples and reagents were used, and from which lot? Have those reagents shown up in other experiments, and if so, who ran them and when? Where's the raw data file that goes with that notebook entry - is it on someone's laptop, in a shared drive folder, or in an email inbox? That kind of search, multiplied across a small team running parallel experiments, adds up to a real productivity drain. It won't show up as a line item on your budget, but it is absolutely a cost - and a considerable one in a startup where everyone's time is already stretched thin.

Without a shared system, data lives in individual notebooks, personal laptops, spreadsheets, and inboxes. When someone leaves (and at startups, people leave), that institutional memory often walks out with them. When you're preparing for a Series A and due diligence surfaces questions about your data trail, the last thing you want is to be scrambling through paper notebooks and spreadsheets trying to reconstruct six months of experiments. Investors doing due diligence on early-stage biotech have seen enough to know what a documentation mess looks like, and what it costs to untangle. Some will walk away from an otherwise strong deal because they've learned, often from experience, that chaotic data management is rarely an isolated problem and introduces significant risk. Bear this in mind if you intend to raise at some point: due diligence is partly about the science, but it's also about whether investors trust the team to execute. Demonstrating good research data management is a small thing that carries a disproportionate amount of weight in that assessment. Clean, traceable research records don't guarantee a yes - but messy ones have a way of turning a maybe into a no, often quietly, without anyone telling you that's why.

There's also a compliance dimension that tends to catch early-stage teams off guard. If you're working toward any kind of FDA interaction (e.g. IND applications or GMP manufacturing), you'll need documented, traceable research records. Auditors are not particularly sympathetic to "we were a small team and things were a bit informal." Starting that paper trail on day one is significantly less painful than trying to retrofit it two years in.

IP protection is another angle that doesn't get enough attention at the early stage. Timestamped, auditable experiment records are exactly what patent attorneys need to establish invention timelines. If you're building on novel science and anticipate filing, having a clear, unambiguous digital record of who did what and when has real legal value - and it's something you can't reconstruct retroactively from paper notebooks with any credibility.

One underrated benefit of a well-maintained ELN is what happens to a team's collective knowledge when research is organized and accessible to everyone. Onboarding a new scientist becomes faster - no need to schedule a series of knowledge-transfer meetings that pull senior people off their own work. A postdoc troubleshooting a failing assay can check whether a colleague ran into the same issue six months ago and how they resolved it. Experiment designs, selection of appropriate controls, troubleshooting, protocol iterations - all of that becomes something the whole team can learn from, rather than knowledge that lives with one person (until they leave). It's the sort of thing that doesn't show up in any ROI calculation, but ask anyone who has managed research teams for long enough and they'll tell you it's one of the bigger differences between a team that plateaus and one that keeps improving. When people can see each other's work, they get better at their own. It's that simple, and a well-maintained ELN is what makes it possible.

And there's a purely practical upside that gets undersold: onboarding a new system when you're three people takes a week. Doing the same thing at thirty people, mid-project, is a different kind of disruption entirely. The cost of switching only goes up.

Key Features Every Startup ELN Should Have

The feature lists on vendor websites are long and largely indistinguishable from one another. Where platforms truly differ comes down to two things that don't always come through on a sales call. The first is execution: whether the features work the way a scientist actually thinks and works, or the way a software developer imagined a scientist thinks and works. Many ELNs are designed by people who have never set foot in a lab - and it shows. Features that look polished on the surface can be seriously clunky and unintuitive the moment a scientist tries to fit them into a real workflow. The second is interconnectivity: how well the different parts of the platform work together in practice. If finding out which samples were used in an experiment requires five minutes of cross-referencing between different sections of the app, the system is creating work rather than eliminating it. Here's what actually matters for an early-stage team:

Flexibility. This is the single most important thing to look for, especially for a startup. Your workflows are still evolving, and fast. So is your science. Your team keeps growing, your inventory keeps moving around as your lab space gets set up and organized, and you're actively building collaborations with external biotech, pharma, and academic partners.

Most traditional ELN and LIMS platforms are rigid. Vendors often blame regulations, but in my opinion that's an excuse. You can absolutely build a system that is both flexible and supports compliance. It's just harder to do than defaulting to rigidity and hiding behind regulatory requirements. We built IGOR that way, and it's doable.

Scientific workflows evolve constantly, and far faster now than they did 20 years ago when many legacy systems were designed. Back then, a typical workflow meant chemical compounds → in vitro assays → mouse studies → IND filing. Today, labs are working across cell and gene therapy, mRNA, biologics, antibody therapies, peptides, and more. Assay diversity has exploded alongside them. A rigid platform won't keep pace, and your scientists will feel it.

Many ELNs offer some form of customization, but dig into the details during your evaluation. Customization often requires vendor support or coding knowledge, which translates to real cost - and for a startup on a budget, that can add up quickly. Ask what can be customized, whether scientists can handle the customization themselves without IT or vendor involvement, and whether there are any additional fees. Also ask if any customization can still be adapted over time as needs change. The answers will tell you a lot.

An electronic lab notebook that grows and adapts with your team will be an asset now and long after your workflows have matured.

Search that earns its keep. The real value of a digital notebook over a physical one is finding things. Six months from now, when someone needs to locate the optimization run where you first saw that expression artifact, the question isn't just what did we observe - it's when was it run, by whom, what exactly was done, and using which samples and reagents. An ELN that structures your experiments around consistent metadata turns that afternoon of digging into a ten-second filter.

But search is only as good as the organization behind it. An ELN that makes it easy to keep experiments linked to the right projects, samples, and protocols is what keeps that filter useful over time. Without it, data accumulates faster than it gets organized - and slowly, almost without anyone noticing, your digital notebook starts to look a lot like the physical one it replaced. Searches start returning hundreds of loosely matched results. Context gets lost. That ten-second retrieval creeps back toward an afternoon.

The best ELNs don't just store your data or let you run generic searches across everything - they give your team the structure to keep it findable, even as the volume grows and the team turns over.
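To make the "ten-second filter" idea concrete, here is a minimal Python sketch of what searching over consistent metadata looks like. The `Experiment` fields, the `find_experiments` helper, and all sample records are hypothetical and invented for illustration. They do not describe any particular ELN's data model or API.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class Experiment:
    """Hypothetical record shape; a real ELN defines its own schema."""
    title: str
    run_on: date
    scientist: str
    project: str
    sample_ids: set[str] = field(default_factory=set)
    tags: set[str] = field(default_factory=set)


def find_experiments(records, *, project=None, scientist=None,
                     sample_id=None, tag=None, since=None):
    """Return the records that match every filter that was supplied."""
    hits = []
    for r in records:
        if project is not None and r.project != project:
            continue
        if scientist is not None and r.scientist != scientist:
            continue
        if sample_id is not None and sample_id not in r.sample_ids:
            continue
        if tag is not None and tag not in r.tags:
            continue
        if since is not None and r.run_on < since:
            continue
        hits.append(r)
    return hits


# Made-up records for illustration only.
records = [
    Experiment("Expression optimization run 3", date(2024, 3, 4), "A. Rivera",
               "antibody-panel", {"S-0012", "S-0013"}, {"expression", "artifact"}),
    Experiment("Binding assay pilot", date(2024, 3, 18), "J. Chen",
               "antibody-panel", {"S-0013"}, {"binding"}),
    Experiment("Purification trial", date(2024, 1, 9), "A. Rivera",
               "antibody-panel", {"S-0012"}, {"purification"}),
]

matches = find_experiments(records, project="antibody-panel", tag="artifact")
print([m.title for m in matches])
```

The filter only works because every record carries the same fields. If half the entries had no project or sample links, the same query would silently miss them, which is the slow decay described above.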

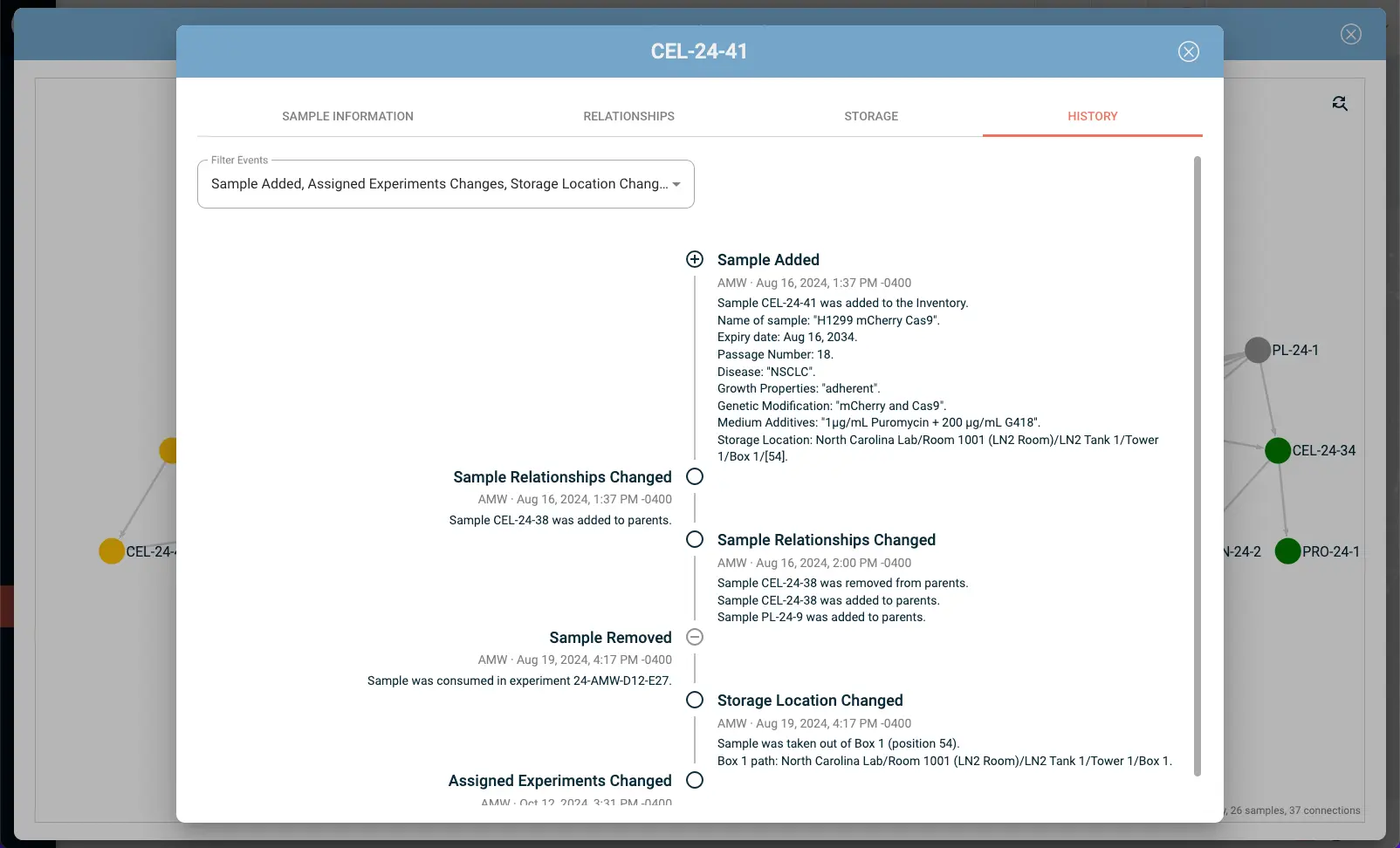

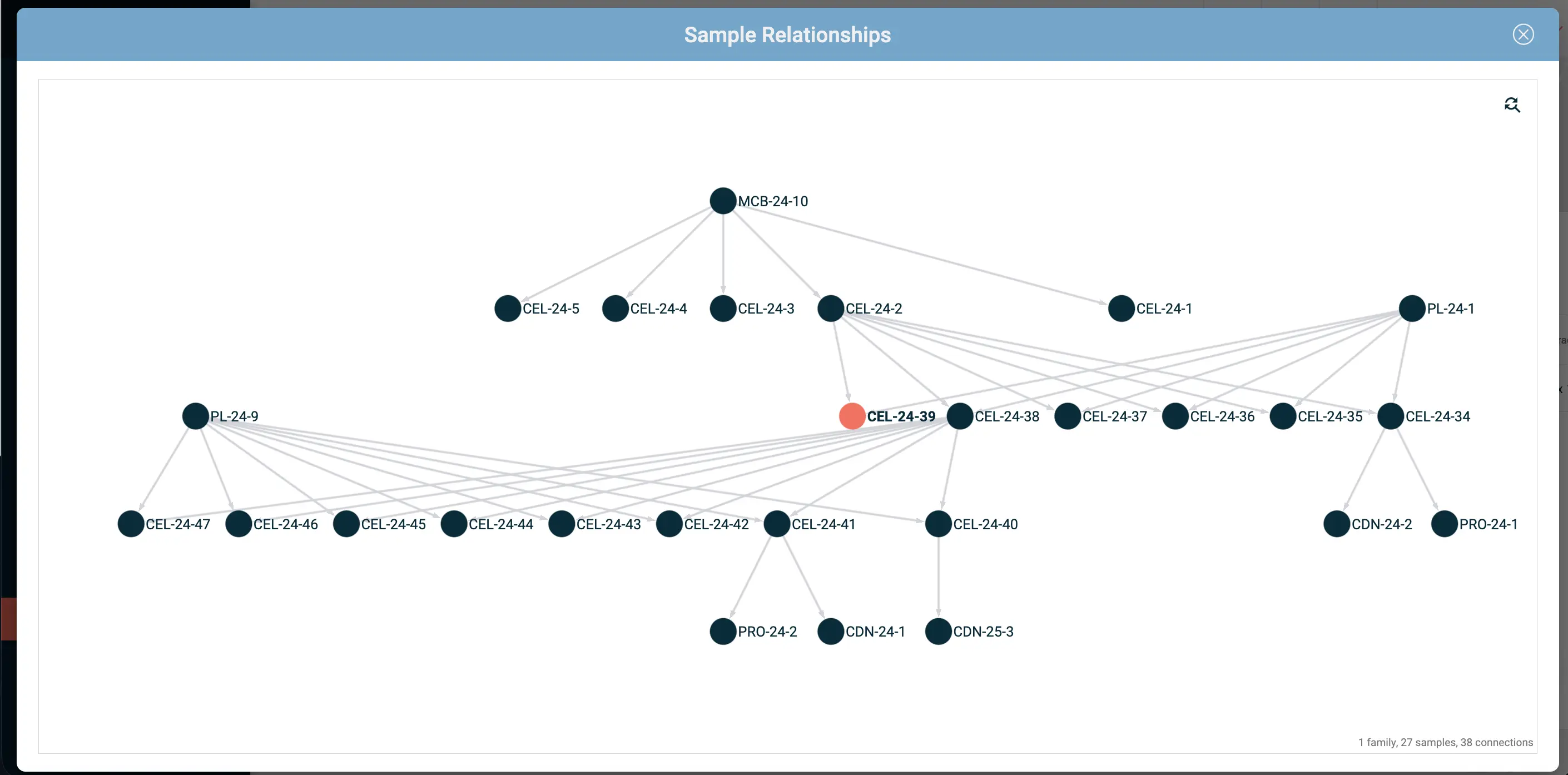

Interconnectivity. A good ELN shouldn't just store your data - it should show you how everything relates. The protocol followed, the samples and reagents used, the observations captured, the data generated, the conclusions drawn. When those elements are linked together instead of siloed, the full picture of an experiment is always just one click away (rather than a cross-referencing exercise across three different tools).

That connectivity has a practical side too. Every additional platform your team uses to plug a gap in your ELN is another subscription, another login, and another place where context gets lost and time gets wasted. When your data lives in one connected system, you're not just saving money - you're also catching relationships and patterns that siloed data would have buried entirely.

When evaluating ELN platforms, ask how deeply the features actually connect. A system where experiments, protocols, samples, and results genuinely talk to each other is a fundamentally different tool than one where those modules happen to share a login screen but are otherwise just patched together.

Collaboration that doesn't require everyone to be in the same room. When everyone is working from the same live data, you eliminate a whole category of friction: no more tracking someone down to find out where an experiment stands, no more waiting for a file to be shared, no more working from a version that's already out of date. That visibility opens the door to something more valuable than convenience - team members can flag issues in experiment design, suggest what to look into next, or offer a fresh read on unexpected results, simply because the data is there and the whole team can contribute.

Startup teams are also often on the go. Conferences, pitch competitions, site visits with collaborators - being on the road is part of the job. An ELN you can access from anywhere means you can pull up data for an investor on the spot, give feedback to the team, or check in on where things stand.

Must-Have Compliance and Security Features

Built-in compliance support. Part 11 is one of those regulations that gets name-dropped constantly but rarely explained, so it's worth knowing what's actually behind it. 21 CFR Part 11 is the FDA regulation that sets the criteria under which electronic records and electronic signatures are treated as equivalent to paper records and handwritten signatures. In practice it comes down to this: a tamper-evident, time-stamped audit trail that logs who did what and when, a closed system that assigns unique user IDs with role-based access controls, electronic signatures tied to verified accounts, and records that can't be edited without leaving a trace.

A vendor who can't walk you through exactly how their platform handles each of those - not a generic marketing answer, but the actual functionality - is worth approaching with caution.
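If "records that can't be edited without leaving a trace" sounds abstract, the sketch below shows one common way to get that property: each audit entry stores a hash that covers its own content plus the previous entry's hash, so changing any earlier entry breaks the chain. This is an illustration of the general idea only. It is not a Part 11 implementation, and it is not a description of how IGOR or any other vendor builds its audit trail. The function names and log entries are hypothetical.

```python
import hashlib
import json


def _digest(entry: dict, prev_hash: str) -> str:
    """Hash the entry's content together with the previous entry's hash."""
    payload = json.dumps(entry, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log: list, user: str, action: str, timestamp: str) -> None:
    """Add a who/what/when entry that is chained to the one before it."""
    entry = {"user": user, "action": action, "timestamp": timestamp}
    prev_hash = log[-1]["hash"] if log else ""
    log.append({**entry, "hash": _digest(entry, prev_hash)})


def verify(log: list) -> bool:
    """Recompute every hash; any edited entry makes the check fail."""
    prev_hash = ""
    for row in log:
        entry = {k: row[k] for k in ("user", "action", "timestamp")}
        if row["hash"] != _digest(entry, prev_hash):
            return False
        prev_hash = row["hash"]
    return True


# Made-up entries for illustration only.
log = []
append_entry(log, "user-001", "created experiment EXP-1", "2024-03-04T09:15:00Z")
append_entry(log, "user-002", "signed experiment EXP-1", "2024-03-04T16:40:00Z")
print(verify(log))  # True

log[0]["action"] = "deleted experiment EXP-1"  # simulate a silent edit
print(verify(log))  # False
```

A useful demo question follows from this: ask the vendor to show what the audit trail records when someone edits an entry that has already been signed.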

Even if you're not targeting FDA submission anytime soon, this infrastructure matters earlier than most startups expect. Institutional partners, grant agencies, and investors are increasingly asking about data integrity and security well before an IND is on the table. Getting data security and compliance infrastructure right at the outset is much easier than retrofitting it, so choosing a platform that has it built in means one less thing to worry about.

On the security side, data encryption in transit and at rest is non-negotiable - any vendor that can't confirm this clearly should be a short conversation. Beyond that, look for access permissions your team can manage without an IT department, electronic signatures tied to verified accounts rather than just a text field, and reliable backup and disaster recovery with cross-regional coverage. Check also that data ownership sits with the institution, not the individual: when a team member leaves, their experiments, protocols, and results need to stay accessible to the team, not walk out the door with them. Unfortunately, many vendors only offer this under higher-priced enterprise tiers. If your team or any of your collaborators are based in the EU, UK, or Switzerland, also ask specifically about GDPR compliance.

IGOR covers all this as standard - not gated behind an enterprise plan. Encryption, audit trail, electronic signatures, role-based access controls, time-stamped records, GDPR compliance, cross-regional backup, and institutional data ownership. All there from day one.

Mobile Access and Bench-Side Documentation

This is consistently underestimated in evaluations and consistently appreciated once people actually have it.

There are two problems it solves, and both are more common than people admit. The first is the gap between observation and record: you notice something at the bench, you're mid-experiment, maybe gloved up, and you make a mental note to write it up properly later. Sometimes you do. Often you forget, or you do note it down but with enough delay that some details have slipped away. The second problem is what happens in the absence of a proper solution: staff pulling out personal phones to photograph gels, plates, or handwritten calculations. The image gets captured, but it's now sitting in someone's camera roll - potentially sensitive, potentially proprietary, definitely not where your company data should live.

An ELN mobile app designed for bench-side use doesn't need to replicate the full desktop experience - that's the wrong goal, and entering data on such a small touchscreen mid-experiment is error-prone anyway. What it should do is let you capture what's in front of you, right now: a photo of a gel, a scan of a handwritten calculation, an observation you'd otherwise trust to memory. It goes straight into your digital lab notebook, timestamped, and attached to the right experiment, without waiting until you're back at your desk.

This is also a genuinely useful bridge for scientists who prefer pen and paper at the bench - and there are still a lot of them, for good reasons. You just add a step at the end: photograph your notes or observations before you leave the lab. The image gets attached to the right experiment, timestamped, and there when you need it - not rattling around on a piece of paper destined for a drawer.

IGOR's mobile app also addresses data security: images captured in-app are never saved to the user's personal device. They're cached temporarily until they are uploaded to the web app, and deleted if they are not uploaded. That removes the risk of sensitive company data ending up in a personal camera roll.

Evaluating ELN Pricing Models for Budget-Conscious Startups

Pricing structures in the ELN space are less standardized than they probably should be, and many vendors are anything but transparent about them, which often makes direct comparison tricky.

The two main models are per-user (seat-based) and flat-rate team plans. Per-user pricing is intuitive when you're small - you pay for what you use. It becomes less attractive when you start adding people who only need read access, like collaborators, advisors, or part-time researchers, and you're paying full seat prices for occasional users. Most ELN vendors do not offer discounted guest user or part-time licenses. IGOR is one of the few exceptions.

Flat-rate plans can be better value at scale, but they often come with caps - on users, on storage, on features - that only become apparent when you're approaching them. Read the terms carefully, specifically around what happens when you exceed plan limits. Will you be forced to upgrade into a higher pricing tier? It's also worth asking whether there's a minimum seat or license purchase requirement - many vendors have one, which means you may be paying for more users than you actually have from day one.
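A quick way to compare the two models is to run your expected headcount through both. The Python sketch below does that with made-up prices, a made-up minimum seat count, and made-up tier caps. Every number and function name is an assumption for illustration, so substitute the figures from your own quotes.

```python
def per_seat_cost(users: int, price_per_seat: float, minimum_seats: int = 0) -> float:
    """Annual cost when every user, including occasional ones, needs a full seat."""
    return max(users, minimum_seats) * price_per_seat


def flat_rate_cost(users: int, tiers: list[tuple[int, float]]) -> float:
    """Annual cost of the cheapest tier whose user cap covers the team.

    tiers is a list of (user_cap, annual_price) pairs.
    """
    eligible = [price for cap, price in tiers if users <= cap]
    if not eligible:
        raise ValueError("team exceeds the largest tier; ask the vendor for a quote")
    return min(eligible)


# Hypothetical figures, not real vendor pricing.
PRICE_PER_SEAT = 600.0
MINIMUM_SEATS = 5
TIERS = [(10, 5000.0), (25, 11000.0), (50, 20000.0)]

for team_size in (3, 8, 12, 30):
    seat = per_seat_cost(team_size, PRICE_PER_SEAT, MINIMUM_SEATS)
    flat = flat_rate_cost(team_size, TIERS)
    print(f"{team_size:>2} users: per-seat {seat:>8.0f}   flat-rate {flat:>8.0f}")
```

Two things tend to show up when you do this with real quotes: the minimum seat requirement sets a floor on per-seat cost for very small teams, and flat-rate cost moves in steps, so adding one person at a tier boundary can change the bill far more than adding one seat would.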

Watch out for what's not in the quote. When you ask a vendor for pricing, what you typically get back is a quote covering the license fee. What often doesn't appear on that quote: onboarding, training, customer support, additional storage, customization, and updates - all of which can carry separate fees depending on the vendor and plan. Before you sign anything, ask explicitly what is and isn't included, get a number for each of those line items if they apply, and ask directly whether there are any other costs that might come up over time that you haven't thought to ask about yet.

We speak with teams regularly who tell us their actual ELN or LIMS cost was significantly higher than what they expected when they signed (more than 2x in some cases) - because of add-ons and fees the sales rep didn't volunteer during the evaluation. It's not always deliberate, but it's common enough that going into any pricing conversation with a checklist is worth the five minutes it takes.

A word on startup pricing packages - and why to read the fine print carefully.

Several of the larger, well-known ELN platforms offer genuinely attractive startup tiers. Low monthly costs, full feature access, easy onboarding. And they're not necessarily a bad deal - for a while. The issue is what happens when you outgrow the limits that qualify you for the startup pricing.

The pricing jump that kicks in once you hit a certain team size, data volume, or funding threshold can be dramatic. We're talking close to 10x in some cases, from what we’ve heard. And by the time that happens, you've typically got a few years of experimental data sitting in the system - data that would take significant time and effort to migrate off the platform. It can feel a lot like a bait and switch, even if it's technically disclosed somewhere in the fine print.

The fix is straightforward, but you have to do it before you sign: ask very specific questions about exactly where the limits of the startup plan are - user count, storage, funding status, revenue thresholds, whatever the triggers are. Then ask for a quote for what your costs would look like once you cross those limits. Get it in writing. A vendor who's comfortable with that conversation is a different kind of partner than one who deflects. If they're hesitant, that's your answer.

The broader point is that a platform with transparent, predictable pricing - even if the starting price isn't the lowest number you see - may be worth considerably more than a cheap startup tier that becomes a problem at exactly the moment you can least afford it. Switching ELNs mid-growth is genuinely disruptive. Factoring in total cost of ownership over five to ten years, rather than just year one, often changes which option looks like the better deal. You can look at IGOR's pricing structure as one reference point for what transparent pricing can look like when you're building your comparison.
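The total-cost-of-ownership comparison is simple arithmetic, and it is worth writing down. The Python sketch below compares a cheap startup tier that jumps to a standard price after two years with a plan that costs more up front but stays flat. All prices, the 10x jump, the one-time fees, and the function names are hypothetical and chosen only to mirror the scenario described above.

```python
def startup_tier_schedule(years: int, startup_price: float,
                          standard_price: float, years_on_startup_tier: int) -> list[float]:
    """Annual prices for a plan that switches tiers once the startup limits are crossed."""
    return [startup_price if year < years_on_startup_tier else standard_price
            for year in range(years)]


def total_cost(annual_prices: list[float], one_time_fees: float = 0.0) -> float:
    """Total cost of ownership: one-time fees plus every year's license."""
    return one_time_fees + sum(annual_prices)


YEARS = 5

# Plan A: low startup tier for two years, then a 10x jump (hypothetical figures).
plan_a = startup_tier_schedule(YEARS, startup_price=2000.0,
                               standard_price=20000.0, years_on_startup_tier=2)
# Plan B: higher starting price that stays flat (hypothetical figures).
plan_b = [6000.0] * YEARS

print(total_cost(plan_a, one_time_fees=3000.0))  # 67000.0
print(total_cost(plan_b))                        # 30000.0
```

In year one, plan A looks three times cheaper. Over five years, under these assumed numbers, it costs more than twice as much, and that is before counting the effort of migrating if you decide to leave at the jump.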

On ROI: treat vendor-supplied numbers as a starting point, not a conclusion. An ELN that saves each scientist several hours per week, prevents one failed repeat experiment per quarter, or reduces the time spent preparing records for an audit has real, calculable value. But that value varies enormously depending on how your lab currently operates. A trial period with your actual data will tell you more than any sales deck.

Should You Choose a Combined ELN/LIMS or Separate Systems?

This is one of the more consequential decisions you'll make during evaluation, and there's no universal right answer. The short version - and there's a longer breakdown of ELN vs LIMS considerations here for anyone who wants it - is that for most early-stage startups, a combined platform is the more practical choice. The core argument is integration. When your experimental records and your sample tracking live in the same system, the link between them is maintained automatically. When they live in separate systems, someone has to maintain that link manually - which means spreadsheets, which means errors, which means the traceability you wanted is only as good as whoever last updated the mapping.

A concrete example: imagine a team characterizing a panel of antibody variants across expression, purification, and binding assays. If the ELN and sample database are separate, every time a sample appears in an experiment someone has to cross-reference IDs between two systems. In a unified platform, the experiment entry links directly to the sample record - sample information, lot number, and storage location included. If that platform also has LIMS capabilities, like IGOR, you get the complete picture: every experiment a sample has been used in, by whom, for what purpose, how samples have been handled, and how samples relate to each other. That's the kind of traceability that matters when an assay fails and you need to figure out quickly whether the problem was the sample, the protocol, or the instrument.

Lab inventory management integrated with your notebook also has practical benefits beyond traceability - knowing that a reagent is running low before you're in the middle of an experiment, for example, or being able to pull a complete reagent history when you're troubleshooting batch variability.

Separate systems can make sense if you have highly specialized LIMS requirements - clinical sample management with specific regulatory demands, for instance - or if you're inheriting an existing LIMS that's already deeply embedded in your workflows. But for teams building from scratch, the maintenance overhead of two systems rarely pays for itself at the startup stage.

Scalability Considerations: From Founding Team to Series B

An ELN evaluation that only considers your current state is an evaluation that will need to be repeated. The question is whether your platform of choice can grow with you or whether you'll be migrating at the worst possible moment - mid-fundraise, mid-study, mid-scale-up.

Data portability is the most important scalability question, and it's the one most teams forget to ask. If you ever need to move off the platform - because your needs outgrow it, because pricing changes, because the vendor gets acquired - can you export your data in a format that's actually usable? Proprietary formats that only open in the vendor's own tools are a lock-in mechanism. Before you sign anything, ask what the export looks like.

User limits and organizational structure. A system that works for five people with roughly equivalent access needs will require more administrative sophistication at thirty. You'll want project-based access controls, the ability to organize data by team or program, and visibility settings that let you share selected data with external collaborators without exposing everything. Ask whether the platform's organizational model can accommodate how you expect to work in two years, not just how you work today.

Support and onboarding. At the startup stage, implementation and customer support matter more than most teams expect going in. The sales demo will always make it look straightforward. What matters is how long it takes your actual team to find their footing. Ask what onboarding looks like, whether there's a dedicated point of contact or a ticket queue, and how responsive support is once you're past the sales process. Also ask what self-serve learning materials are available: video tutorials, documentation, a knowledge base. Startups grow quickly, and you'll be onboarding new team members yourself long after the vendor's initial training is a distant memory. A platform with genuine customer support - not just an AI chatbot - and solid on-demand resources can save a significant amount of time in the first few months, and well beyond.

User Experience and Team Adoption

This is the factor that derails more ELN implementations than anything else, and it's the one most systematically underweighted in evaluations.

A platform that your team won't use is worse than no platform. Not marginally worse - significantly worse, because it creates the false impression that documentation is happening when it isn't. The failure mode looks like this: the ELN gets set up, people use it for a few weeks, friction accumulates, individuals start keeping notes elsewhere "just for now," and three months later you have a hybrid system that's less organized than what you started with.

The friction that kills adoption is usually not dramatic. It's an interface that requires more clicks than it should. It's a mobile experience that's technically functional but painful enough that people don't bother. It's a template structure that doesn't map onto how your team actually runs experiments, so every entry requires workarounds.

Getting buy-in from the group leaders or senior researchers matters more than almost anything else. If senior people use the system visibly and consistently - reference entries in group meetings, link to protocols from their own records - the team follows. If senior team members treat documentation as overhead and keep their own notes elsewhere, adoption fractures from the top.

Involve your team in your evaluation. It's good to narrow down the search a little first, so there aren't too many choices diluting the discussion. But different people will use the system differently and prioritize different things, so involving your team will help surface potential friction points. The bench scientist is particularly important - they're the person who'll be entering data daily, often under time pressure, often at a workstation that isn't optimized for software use.

On customization: some teams want to configure everything. Others want something that works straight out of the box. Neither is wrong, but they lead to different platform choices. Highly configurable systems give you more control but require real upfront investment to get right - and in a startup, that time has a cost. Platforms that impose their own structure are faster to implement, but that structure may or may not fit how your team actually works.

For most startups, something in between is the better fit. IGOR sits in that middle ground: scientists can adapt the platform to their workflows through point-and-click configuration - no IT support, no coding skills required - and the structure keeps data organized automatically from day one, so there’s less maintenance and you're not spending the first month just setting things up.

Be honest about your team's appetite for configuration before you get drawn in by a demo of elaborate customization options you won't actually have time to implement.

Vendor Support and Implementation Timelines

For a startup without dedicated IT staff, vendor support is your IT department for this system. Treat the evaluation of support accordingly.

Questions worth asking explicitly: What channels are available and what are the response time commitments? Is support included in your plan or tiered? Do you get a dedicated onboarding contact, or do you go straight into a general queue? What does the documentation look like - is it comprehensive enough that your team can self-serve common questions?

Implementation timelines for cloud-based ELNs are generally shorter than people expect. A well-designed platform can have a small team functional within a week or two. If a vendor quotes an implementation timeline measured in months for a team your size, dig into why. It may be warranted - if you're doing a complex data migration or need custom integrations - but it's worth understanding before you sign.

Be cautious of vendors pushing a long-term commitment before you've had a chance to use the system with real data - especially when the incentive is a discount. A meaningful price reduction in exchange for a two- or three-year contract can look attractive early in an evaluation, but it's worth thinking carefully about what you're trading for it. If the platform turns out not to fit your workflows, or your needs shift significantly as you scale, that discount starts to look a lot less valuable. A vendor confident in their product doesn't usually need to lock you in to keep you.

Real-World Questions to Ask During ELN Demos

Most demos are produced to show you the best-case version of the software - polished graphics, ideal workflows, sometimes slides standing in for the actual application. Your job is to get past that. The questions below are designed to find the friction that a standard demo won't show you.

"Can you show me how [our specific workflow] would work?"

Describe your most complex or idiosyncratic experimental process and ask them to demo it live - not conceptually, in the actual interface. If they can't do it in the demo, it won't get easier once you're a customer.

"What file formats do you support, and can we import data directly from Excel or CSV?"

A huge proportion of lab instrument output comes as spreadsheets. If your team has to manually copy data into the ELN, you’re not just wasting their time, but also introducing a source of transcription errors. Ask to see an import demonstrated live.

"How does your system handle protocol management and SOPs?"

The range here is wide. Some platforms treat SOPs as living documents with version control, approval workflows, and direct links to experiments. Others let you attach a PDF. If protocol management matters to your team, probe specifically.

"What does our data export look like if we decide to leave?"

Watch how this question lands. A vendor who has a clear, practiced answer and offers to show you the export during the demo is a different kind of vendor than one who gets vague or defensive. This question tells you a lot.

"What costs should we expect beyond the licensing fee?"

Get specific. Ask about onboarding and implementation fees, training costs beyond the initial session, charges for customization or additional storage, fees for customer support above a basic tier, and whether updates or new features ever carry additional costs. Also ask whether any of the features shown in the demo sit behind a higher pricing tier than the one you're being quoted. It's not uncommon to walk out of a demo excited about functionality that turns out to be an add-on.

"What's your typical implementation timeline for a team our size, and what causes it to run long?"

The second half of that question is the important part. The answer to the first question is usually optimistic. The answer to the second is more informative.

"What does your support model look like after onboarding?"

The sales and onboarding experience is rarely representative of what day-to-day support actually looks like once you're a paying customer. Ask specifically: is there a dedicated contact, or does every question go into a ticket queue? What are the typical response times? Is support included in your plan or metered separately? Also ask what self-serve resources are available - detailed documentation, video tutorials, a searchable knowledge base. Your team will grow, and you'll be onboarding new hires long after the vendor's initial training session. Remember that.

"Are there any limits we should know about - on features, storage, users, or data volume - based on the plan we're considering?"

Some platforms cap the number of experiments, inventory items, or users at certain tiers. Others gate features like institutional data ownership, advanced permissions, or audit trails behind enterprise plans. Ask for a clear breakdown of what your plan includes and where the ceilings are. Then ask what happens when you hit them: does the system notify you, does it stop working, or does it just start charging you more? You want those answers before you sign, not after you hit the first ceiling.

"Can we trial this with our actual data?"

Not a demo dataset. Your own experiments, your own file types, your own protocol structure. The gap between a polished demo and real-world use is where most surprises live.

"Can existing customers talk to us?"

A vendor who is genuinely confident in their product and their support will have customers willing to say so. Ask for references from teams at a similar stage to yours - early-stage startups if that's where you are.

Making Your Final Decision: A Practical Checklist

By the time you've done demos and run a trial, you probably have a strong intuition about which platform fits best. The checklist below is less about generating a score and more about sanity-checking that intuition.

Are your must-haves covered?

Go back to the requirements you defined before the evaluation started. Separate the things you genuinely can't operate without from the things that would be nice to have. If a platform covers all your non-negotiables, the comparison between nice-to-haves should be secondary.

Don't get distracted by features you'll never use. Salespeople know exactly what they're doing when they design a demo - it's their job to make everything look essential. It's a bit like a bride flicking through wedding magazines: before long she's planning a 500-person event with a budget that would make a CFO cry, because each individual addition seemed reasonable at the time. The same thing happens in ELN evaluations. That AI-powered something-or-other, the advanced integration with a platform your team doesn't use, the module that might be relevant if your research ever goes in a completely different direction - spoiler alert: you won't use it. It'll add cost, add complexity, and sit unused. Stay focused on the problems you're actually trying to solve.

Did the trial surface real friction?

If it did, be skeptical of "it'll get better once you're used to it." Some friction is familiarity - any new system has a learning curve. But friction that comes from genuine usability issues tends to persist and compound. Trust what you saw in the trial.

Did you involve the people who will use it daily?

If the evaluation was driven by one person without input from the bench scientists who'll be entering data every morning, there's a gap worth filling before you commit.

Do you have clarity on the contract terms that matter?

Specifically: total costs including any fees not covered by the license, data ownership and export rights, auto-renewal clauses, what support is included versus tiered, and what the exit terms look like. Read the data ownership clause carefully - you want it unambiguous that your data is yours, regardless of what happens to the vendor.

Vendors will occasionally offer a discount in exchange for a case study down the line - worth raising if you're comfortable with it.

Are you making a sustainable choice?

The cheapest option today isn't always the right one. Make sure you understand not just what the platform costs now, but what it will cost in two or three years - when your team is larger, your data volume is higher, and your funding situation has changed. A price that works at five people can look very different at twenty.

FAQ

What is the typical cost of an ELN for a small biotech startup?

Difficult to say with any precision, as few vendors publish their pricing openly, and most are anything but transparent about it. What we hear during demos and conversations with biotech teams going through ELN evaluations is that it varies enormously. From what we've seen across the market, seat-based pricing for cloud platforms can range anywhere from $49 to more than $500 per user per month. Options at the lower end typically cover basic ELN functionality only - no inventory or LIMS capabilities - or come with limits tight enough that an upgrade becomes inevitable before long. For a team of three to five researchers, a reasonable all-in annual budget including onboarding is somewhere in the $3,000–$10,000 range, though enterprise-oriented vendors can push that considerably higher. Some vendors also offer startup-specific pricing that isn't publicly listed - worth asking about directly.
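To see how seat-based pricing scales, it can help to run the arithmetic yourself. The sketch below is a rough illustration only: it takes the $49 and $500 per-user monthly figures quoted above as the low and high ends, assumes a flat per-seat price with no volume discounts, and treats the onboarding fee as an optional placeholder you would fill in from an actual quote. The function name and team sizes are our own choices for illustration.

```python
# Rough seat-based cost projection (illustrative assumptions only).
# Assumes a flat monthly price per seat; real quotes may include
# volume discounts, tier changes, or storage charges not modeled here.

def annual_cost(seats: int, price_per_seat_month: float,
                onboarding_fee: float = 0.0) -> float:
    """Annual cost: seats x monthly price x 12, plus a one-off fee."""
    return seats * price_per_seat_month * 12 + onboarding_fee

LOW_PRICE = 49.0    # USD per user per month, low end of the quoted range
HIGH_PRICE = 500.0  # USD per user per month, high end of the quoted range

for seats in (3, 5, 20):
    low = annual_cost(seats, LOW_PRICE)
    high = annual_cost(seats, HIGH_PRICE)
    print(f"{seats:>2} seats: ${low:,.0f} to ${high:,.0f} per year")
```

Under these assumptions, five seats come to roughly $2,940 to $30,000 per year before onboarding, and twenty seats to roughly $11,760 to $120,000. Swapping in the per-seat price and fees from a real quote gives you a quick view of what the same plan costs as the team grows.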

If you want a straightforward answer on at least one platform, IGOR's pricing for ELN and lab inventory management is publicly available and fully transparent.

Can we migrate our existing paper lab notebooks to a digital lab notebook?

Yes, with caveats. Digital files, like typed notes, spreadsheets, and protocol documents, can typically be imported with reasonable speed and fidelity. Physical paper notebooks are a different challenge. They can be scanned and attached as PDFs, but that doesn't give you searchable, structured data. Most teams find it more practical to pick a cutover date and move forward from there, rather than trying to digitize historical records in bulk. The historical records exist as scanned PDFs; new work goes into the ELN. Some vendors offer migration support - ask specifically what that includes and how much it would cost before you assume it covers your situation.

How long does it take to implement an ELN in a startup lab?

For a cloud-based platform with a reasonable onboarding process and no data to migrate, most teams are genuinely functional within one to two weeks. That includes account setup, basic configuration, and getting the core team comfortable enough to use it for real work. Migrating existing data adds time depending on volume and format. If a vendor quotes an implementation timeline longer than a month for a team of under twenty people, ask specifically why - sometimes the answer is warranted, sometimes it reflects a more complex product than you actually need.

What's the difference between a free ELN and a paid one?

Most ELN vendors who offer a free tier will tell you the main difference comes down to team size, features, or storage - all things you can address later by simply upgrading once you hit those limits. In the meantime, you get an ELN for free. Sounds great, right?

Not really. While those limitations are real, the more consequential difference rarely gets mentioned: data ownership. Free plans are almost universally structured around individual accounts, which means the data is tied to the person, not the company. A departing team member, a dispute, or an account that simply lapses can put your research records at serious risk. For a startup building IP, preparing for due diligence, or working toward any kind of regulatory interaction, that's a risk that dwarfs whatever you'd pay in monthly subscription fees for a proper team platform.

Add the usual free-tier constraints on top - storage limits, no electronic signatures, basic version history rather than a compliance-grade audit trail, and support that amounts to a help center article (or none at all) - and the picture becomes clearer. Free tiers have their place as a way to evaluate whether a platform's interface works for your team. For a company with IP to protect, compliance obligations to meet, or investors to answer to, they're not a foundation to build on.