If you've spent any time evaluating lab software, you've drowned in acronyms by now. ELN, LIMS, SDMS, ALCOA+, Part 11, CSV, CSA. Vendor pitch decks don't help - they throw these around like everyone already knows what they mean.

We put this together as the reference we wish existed when we started. It's organized by topic instead of alphabetically. Why? Because knowing what "ALCOA" means is more useful when you can read it next to "audit trail" and "electronic signature" than when it's wedged between "aliquot" and "API."

Bookmark it. You'll be back.

Electronic Lab Notebook (ELN)

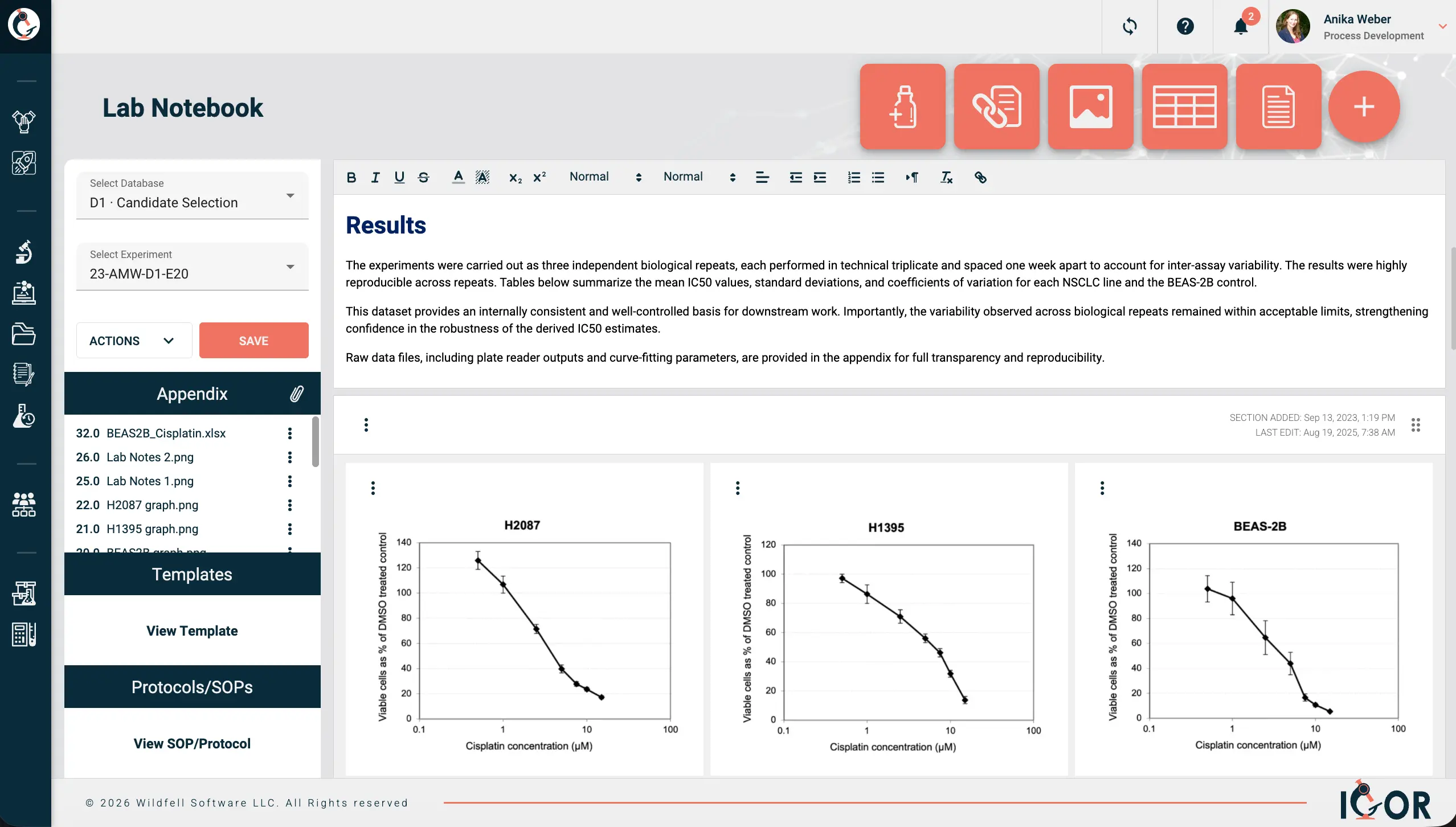

An ELN is your lab notebook on a computer. You document experiments the same way you would on paper - objectives, methods, observations, results - except the records are searchable, accessible to your whole team, and will be easy to find five years from now when someone needs to build on that work. The practical difference from just using Word docs or a shared drive is what runs underneath: immutable audit trails, full document version history, legally defensible electronic signatures. That's the compliance baseline.

But a good ELN does more than tick regulatory boxes. It organizes your data around experiments rather than files, which sounds like a small thing until you're six months into a project and can actually find everything you need without digging through seventeen folders. Standardized templates keep documentation consistent across the team, and access controls let you open up collaboration with external partners without exposing sensitive data they shouldn't see.

IGOR is an example of an ELN that connects all of this in one place. Each notebook entry links to the protocol used, the samples and reagents involved, raw data, associated files, and the project it belongs to. Reagents and samples carry their own history too, so you can see exactly how they connect to other experiments and to each other. Anyone who has tried to replicate that with a shared drive knows how that story ends.

Laboratory Information Management System (LIMS)

Where an ELN follows the scientist and the experiment, a LIMS follows the sample. Where did this sample come from, what tests were run on it, what were the results, and where did it end up? That chain of custody and workflow tracking is what a LIMS does well, and it's why you find them mostly in QC labs, manufacturing environments, and high-throughput testing operations where the same workflows run day after day. Some newer platforms combine LIMS and ELN functionality into a single system. IGOR, for example, integrates sample and inventory management directly alongside experiment documentation.

Scientific Data Management System (SDMS)

Think of it as a structured filing cabinet for instrument output. An SDMS pulls files directly from your instruments (chromatograms, spectra, images), catalogs them, and makes them searchable. Everything stays in its original format, which matters when an auditor wants the raw data rather than just whatever summary ended up in your report. Many ELN and LIMS platforms these days have SDMS functionality built in.

Laboratory Execution System (LES)

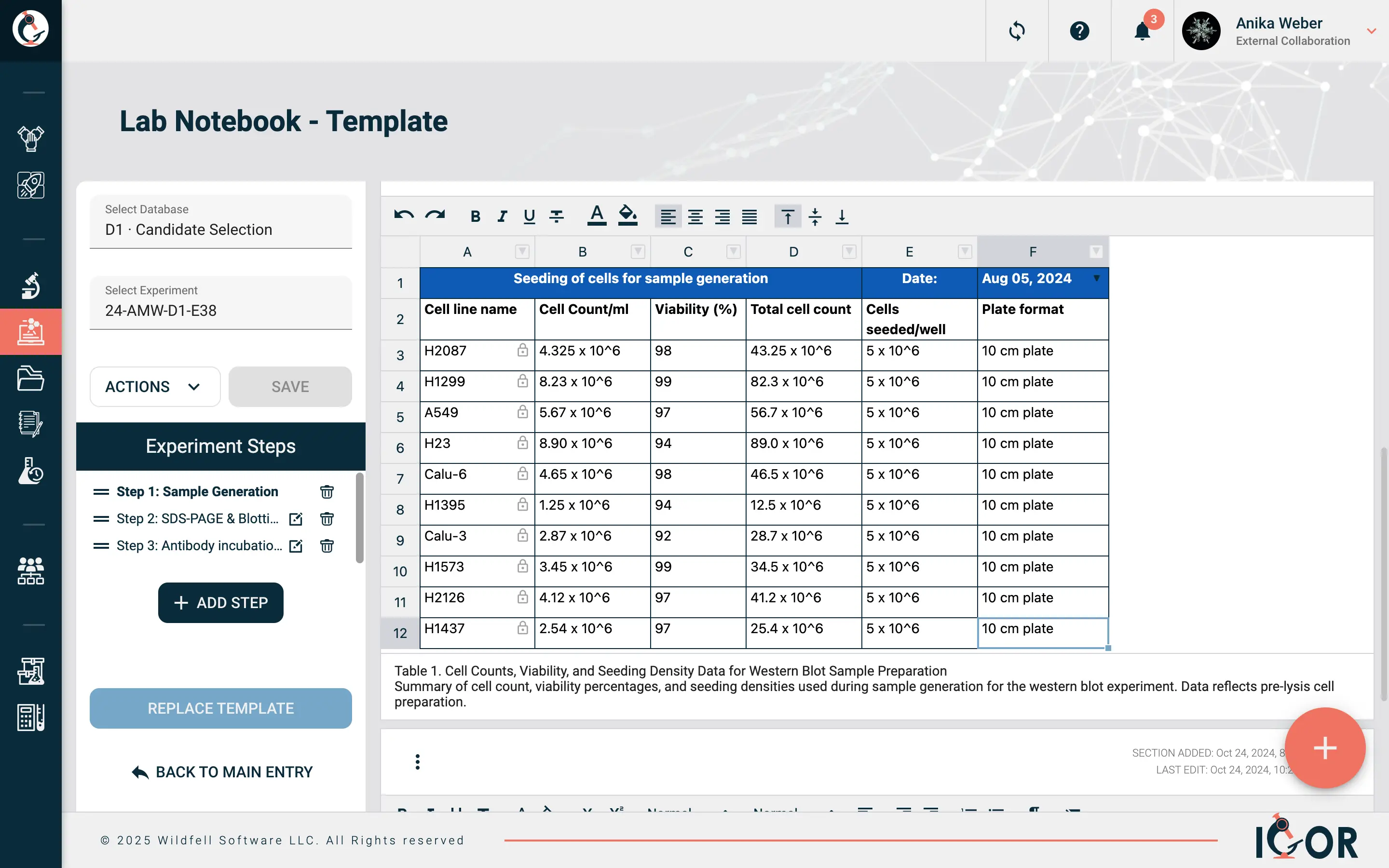

An ELN for people who aren't supposed to improvise. It walks technicians through procedures step by step, enforcing sequence and capturing data at each stage. Manufacturing and QC labs use these when SOPs must be followed to the letter, no exceptions.

Chromatography Data System (CDS)

Specialized software for HPLC, GC, and similar instruments. Handles acquisition, peak integration, calibration curves, results. Most plug directly into the instrument and talk to a LIMS on the back end for sample tracking.

Laboratory Information System (LIS)

The clinical lab cousin of a LIMS. Patient-centric instead of sample-centric - it talks to Electronic Health Record systems, receives test orders, returns diagnostic reports. People use LIMS and LIS interchangeably sometimes, but the clinical context is the real distinction.

Quality Management System (QMS)

The framework (and usually the software) that manages your quality policies: document control, change control, CAPAs, deviations, training records. Everything lives under this umbrella. If you have SOPs, you have a QMS of some kind. Whether it's any good is a separate conversation.

Electronic Quality Management System (eQMS)

The electronic implementation of a QMS. The functions are the same - document control, training records, deviations, CAPAs - but running it in dedicated software means everything is connected and traceable in ways a spreadsheet or shared drive system simply can't match. Anyone who has prepared for an inspection on a paper-based system will appreciate the difference.

Enterprise Resource Planning (ERP)

Big corporate software for procurement, finance, supply chain. Labs bump into ERP systems when ordering supplies or tracking costs. Not lab-specific at all, but it's part of the IT landscape, especially when someone asks why your purchase order is taking three weeks to process.

Graphical User Interface (GUI / UI)

The visual layer you actually interact with - buttons, menus, dashboards, drag-and-drop elements. A badly designed GUI is the reason half of lab software adoption fails. Scientists will tolerate a lot, but if the interface takes six clicks to do something that should take two, they'll go back to paper. When evaluating platforms, pay attention to how the UI feels during a basic, day-to-day task from a real workflow, not just during the polished vendor demo.

Lab Notebook Concepts

Experiment Entry

One record in your ELN for one experiment or research activity. Title, date, objective, materials, method, results, observations. Like a notebook page, except there's no page limit and you can actually search it later.

Notebook Template / Experiment Template

Predefined forms that standardize how your team documents a specific experiment type. Every researcher on the team follows the same format, captures the same fields, and produces records that are actually comparable to each other. Without them, every researcher documents things slightly differently, which creates real problems when you need to compare results across experiments or prepare for an audit.

Workspace

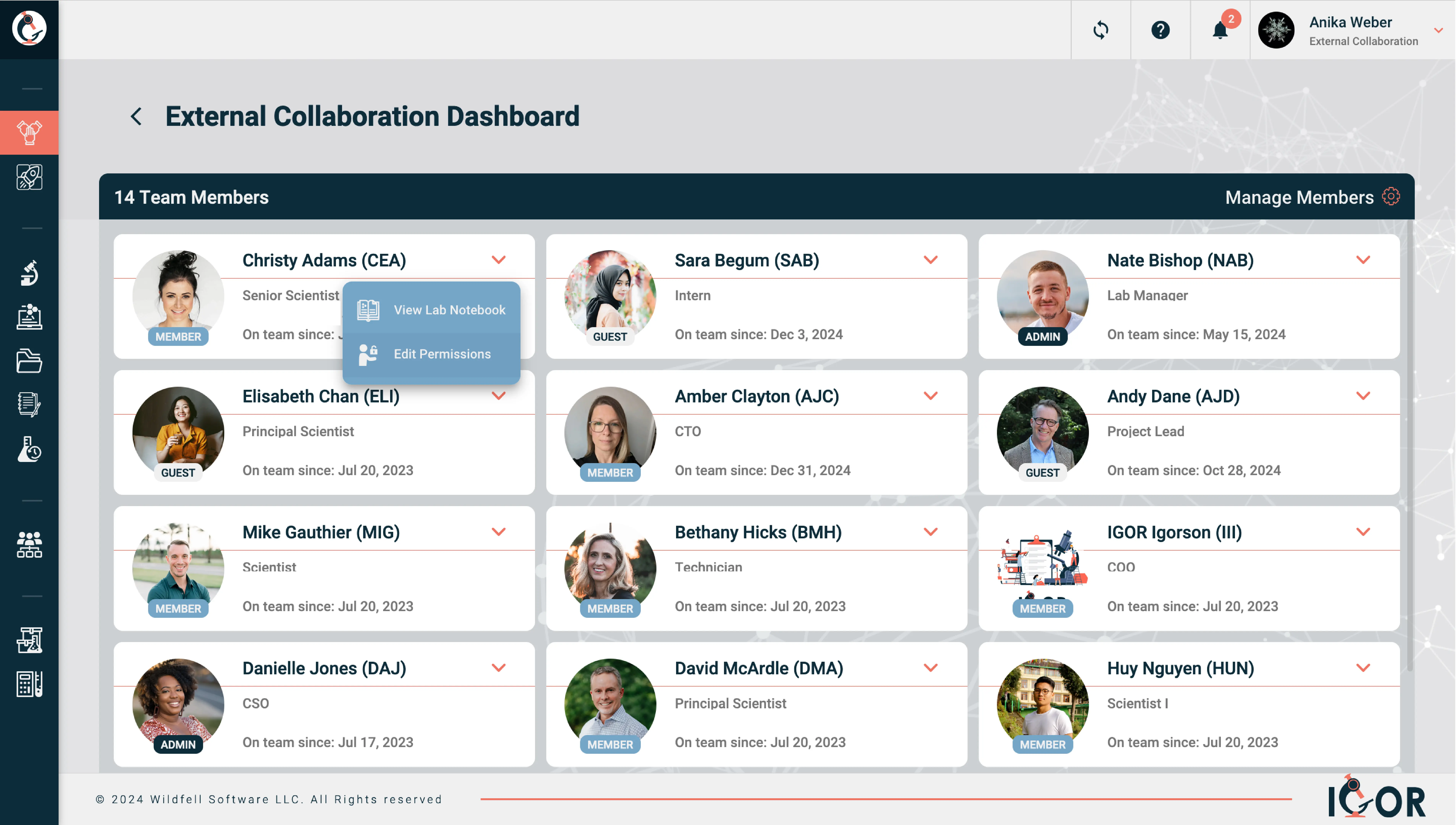

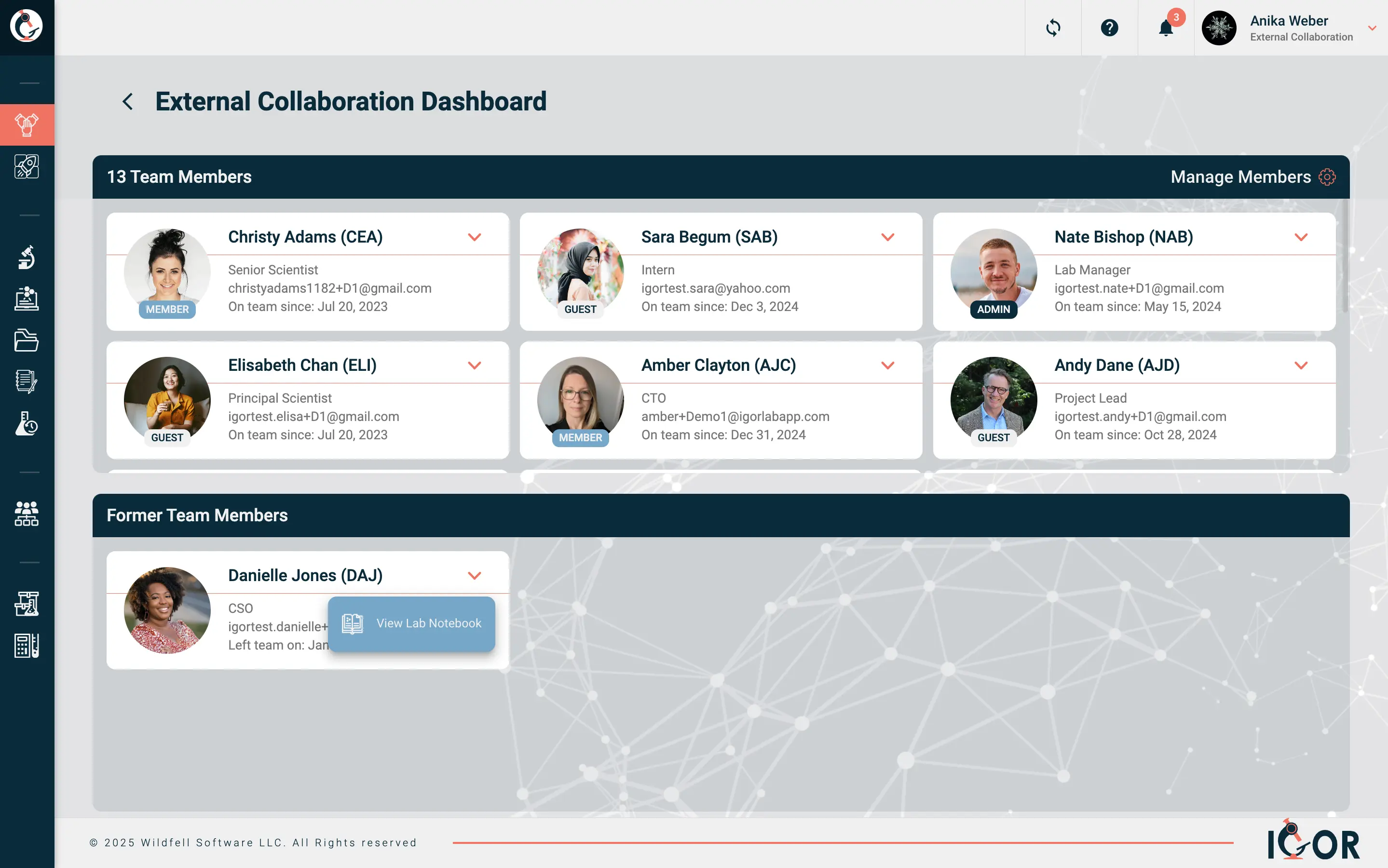

A configurable area in an ELN where a team or project keeps its entries organized. Each one can have its own permissions, templates, folder structure. Useful for keeping Project A's data separate from Project B without maintaining entirely separate systems. Most ELNs or LIMS platforms operate on a single shared workspace for the whole organization. IGOR uses a multi-workspace model instead, where each team, department, or project gets its own self-contained environment with independent templates, permissions, and inventory. Researchers can belong to multiple workspaces with different access levels in each, and external collaborators get access only to what's relevant to their project.

Witness / Countersignature

A second signature from someone who isn't the author, confirming the entry has been reviewed. Important for IP (patent evidence) and for regulatory compliance. In an ELN, the witness signs electronically with their own credentials.

Entry Locking

Making a notebook entry read-only after it's finalized and signed. Amendments are still possible in most systems, e.g. appended notes or linked corrections, but they sit alongside the original rather than replacing it, with everything captured in the audit trail. For regulated labs this is a fundamental data integrity requirement.

Rich Text / Free-Form Entry

Unstructured content in your ELN. Formatted text, inline images, tables, sketches, attached files. As opposed to structured entry where you're filling in predefined fields. Most scientists want both, depending on what they're doing that day.

Cross-Referencing

Linking one notebook entry to related records: other experiments, samples, protocols, instrument data. When it works well, an auditor can follow the thread from your experiment to the protocol, the reagents, and the raw data. Good cross-referencing is what makes it possible to reconstruct the full context of an experiment even months or years later.

Real-Time Collaboration

The ability for multiple researchers to view, comment on, or contribute to the same records simultaneously - think Google Docs, but for lab data. Matters a lot for multi-site teams or collaborations where people aren't physically in the same building. Not every ELN handles this well, making it an important differentiator during any software evaluation.

Sample and Inventory Management

Sample Tracking

Following a sample from the moment it shows up (or gets created) through processing, testing, storage, disposal. This is the core of what LIMS systems do. In platforms that combine LIMS and ELN functionality (like IGOR), you also get visibility into which experiments a sample was used in and how samples connect to each other across different studies. For labs running complex or long-running research programs, that broader view turns out to be genuinely useful.

Chain of Custody

The documented record of every hand a sample passed through - tracking who handled it, when, and where, from collection to final analysis. It's how you prove that a sample's identity and integrity were maintained throughout. This is particularly important in forensics, environmental testing, and clinical labs, where a broken chain of custody can invalidate results entirely, regardless of how good the science was.

Aliquot

A measured sub-portion of a sample. E.g. you pull 500 µL from your 10 mL stock for testing. A LIMS tracks parent-child relationships so every aliquot traces back to its source, even after you've split the sample four times.
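To make the parent-child idea concrete, here is a minimal Python sketch. The `Sample` class, its fields, and the sample IDs are all hypothetical and not taken from any particular LIMS; the only point is that each aliquot keeps a link to the sample it came from.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Sample:
    sample_id: str
    volume_ul: float
    parent: Optional["Sample"] = None  # None means this is the original source

    def aliquot(self, new_id: str, volume_ul: float) -> "Sample":
        """Remove volume from this sample and return a child that points back to it."""
        if volume_ul <= 0 or volume_ul > self.volume_ul:
            raise ValueError("aliquot volume must be positive and not exceed what is left")
        self.volume_ul -= volume_ul
        return Sample(new_id, volume_ul, parent=self)

    def lineage(self) -> list[str]:
        """Walk the parent links back to the original source sample."""
        chain, node = [], self
        while node is not None:
            chain.append(node.sample_id)
            node = node.parent
        return chain


stock = Sample("STOCK-001", 10_000.0)      # 10 mL expressed in µL
a1 = stock.aliquot("STOCK-001-A1", 500.0)  # the 500 µL aliquot from the example above
a2 = a1.aliquot("STOCK-001-A1-B1", 100.0)  # a further split of that aliquot
print(a2.lineage())  # ['STOCK-001-A1-B1', 'STOCK-001-A1', 'STOCK-001']
```

However many times the sample is split, `lineage()` still ends at the original stock.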

Reagent Management

Tracking what reagents you have, their lot numbers, storage locations, expiration dates, and which experiments used them. Sounds simple. It stops being simple when you discover someone's been running assays with an expired buffer for two weeks and nobody noticed.

Inventory Management

Reagent management but bigger - covers everything in the lab. Consumables, chemicals, biologicals, equipment, supplies. Quantities, locations, expiration dates, reorder levels, vendor info. IGOR includes integrated inventory management that connects materials directly to experiments, which handles traceability without extra data entry.

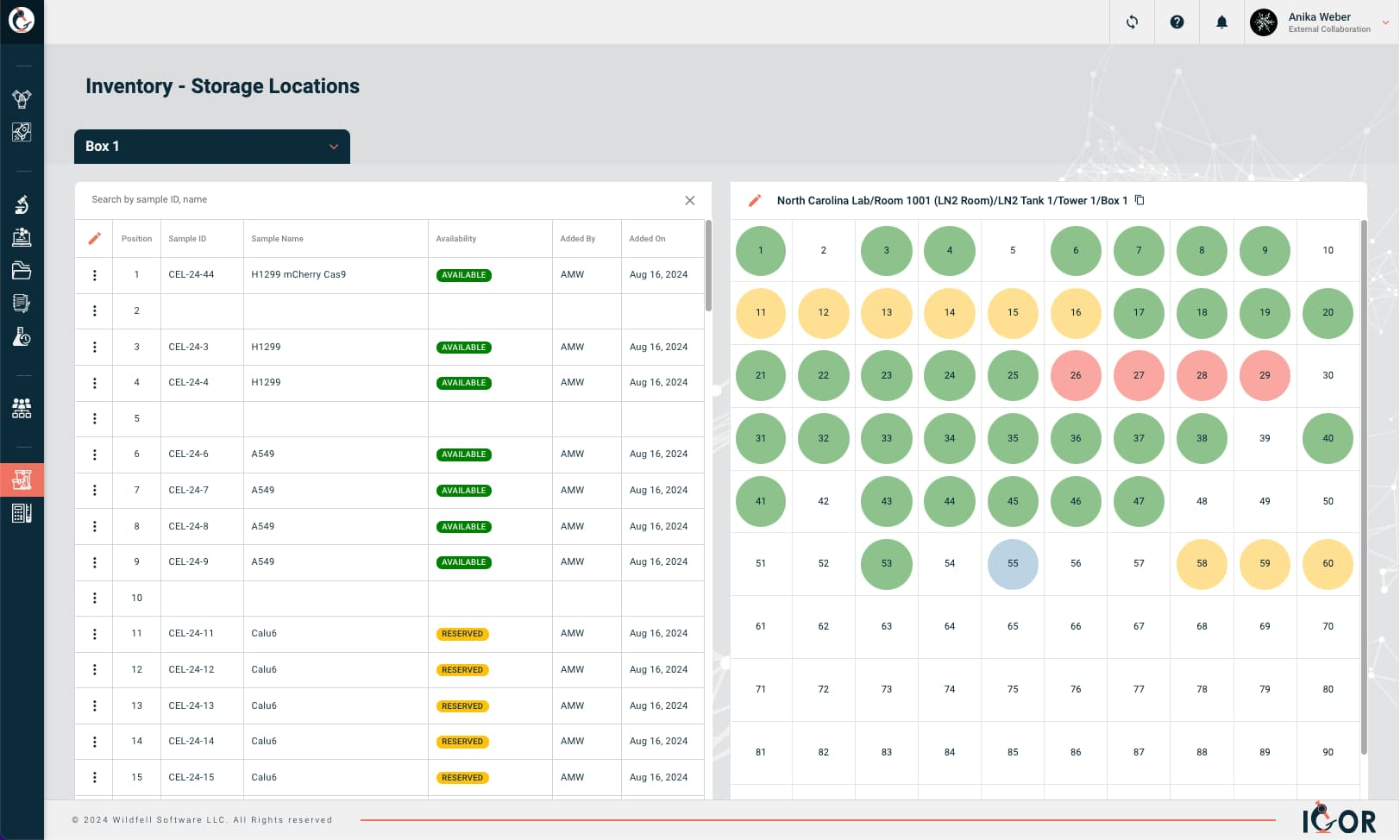

Storage Map

A visual layout of where things physically live: building, room, freezer, shelf, rack, box position. Saves your sanity when you're hunting for a specific sample in a -80°C freezer on a Friday evening.

Barcode / QR Code

Machine-readable labels for identifying samples, reagents, equipment, storage locations. Scan it, pull up the record. Faster than typing, way fewer transcription errors.

Lot Number

A manufacturer's identifier for a specific production batch. If that lot gets recalled, you need to know every experiment that touched it. Without tracking, that's a guessing game you'll lose.

Certificate of Analysis (CoA)

Document from a supplier confirming that a product met specifications. Shows test results, lot number, expiration. Regulated labs keep these on file. Filing them isn't exciting. Not having them when an auditor asks is worse.

Bill of Materials (BOM)

Complete list of materials and components needed for a specific process or product. Common in manufacturing where batch records have to document exactly what went into each run.

Reorder Point

The inventory threshold that should trigger a new order. Get it right and you never run out. Get it wrong and you find out at 9 AM on a Monday that nobody ordered competent cells and your cloning experiment is dead in the water.
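One common rule of thumb for setting the threshold is average daily usage times supplier lead time, plus a safety buffer. The sketch below assumes that rule; the function names and numbers are made up for illustration, and your lab may well use a different formula.

```python
def reorder_point(daily_usage: float, lead_time_days: float, safety_stock: float = 0) -> float:
    """Stock level at which a new order should go out (rule-of-thumb formula)."""
    return daily_usage * lead_time_days + safety_stock


def needs_reorder(on_hand: float, daily_usage: float, lead_time_days: float,
                  safety_stock: float = 0) -> bool:
    """True once stock on hand has dropped to or below the reorder point."""
    return on_hand <= reorder_point(daily_usage, lead_time_days, safety_stock)


# Hypothetical numbers: 2 vials used per day, 5-day delivery, 4 vials kept as a buffer
print(reorder_point(2, 5, 4))      # 14
print(needs_reorder(12, 2, 5, 4))  # True
print(needs_reorder(20, 2, 5, 4))  # False
```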

Expiration Tracking

Automated alerts for reagents and consumables approaching their expiration dates. Without this, you get the classic audit finding: "expired reagent observed in active use."
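Under the hood, an expiration alert is little more than a date comparison run on a schedule. A rough Python sketch, with a made-up reagent list and an assumed 30-day warning window:

```python
from datetime import date, timedelta


def expiring_soon(reagents, today, warn_days=30):
    """Return (name, lot, status) for reagents already expired or expiring within warn_days."""
    alerts = []
    for r in reagents:
        if r["expires"] < today:
            alerts.append((r["name"], r["lot"], "EXPIRED"))
        elif r["expires"] <= today + timedelta(days=warn_days):
            alerts.append((r["name"], r["lot"], "EXPIRING"))
    return alerts


# Hypothetical inventory; names, lots, and dates are illustrative only
reagents = [
    {"name": "PBS buffer", "lot": "LOT-A", "expires": date(2025, 1, 10)},
    {"name": "Trypsin", "lot": "LOT-B", "expires": date(2025, 2, 1)},
    {"name": "DMSO", "lot": "LOT-C", "expires": date(2026, 6, 30)},
]

alerts = expiring_soon(reagents, today=date(2025, 1, 15))
for name, lot, status in alerts:
    print(f"{status}: {name} ({lot})")
```

The hard part in practice is not the check itself but making sure every reagent actually has a lot number and expiration date recorded in the first place.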

Consumables

Single-use lab materials: pipette tips, tubes, plates, filters, gloves. Regulated labs may need to track consumable lot numbers. All labs benefit from knowing how fast they're going through things for budgeting and reorder purposes.

SOP and Protocol Management

Standard Operating Procedure (SOP)

Written instructions for doing a task the same way every time. Required in pretty much every regulated environment. The official answer to "how do we do this?" - as opposed to "well, when I started here, Dave showed me and I've been doing it that way ever since." If you're interested in learning more about how to manage SOPs, check out our blog post Mastering Standard Operating Procedures in the Lab.

Protocol

A detailed plan for a specific experiment or study. More targeted than an SOP. The SOP tells you how to use the HPLC. The protocol tells you how to use the HPLC to answer your particular research question.

Version Control

Tracking every change made to a document over time - who made it, when, and why - with each revision assigned a version number and the full history kept intact. "Which version of this SOP was in effect when that experiment was run?" is exactly the kind of question auditors ask.

Document Lifecycle

The stages a controlled document moves through: draft, review, approval, release, periodic review, revision, retirement. Document management systems enforce this so that only current, approved versions are available.

Effective Date

When a new or revised SOP officially takes over from the previous version. Work before this date follows the old version; work after follows the new one. Simple concept, but it trips people up during audits when dates don't align.
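Answering "which version applied on this date?" is a simple lookup once effective dates are recorded. The sketch below uses invented version numbers and dates, and it assumes the new version applies from its effective date onward (inclusive); check how your own document system treats the boundary day.

```python
from datetime import date

# Hypothetical SOP history: (version, effective date)
sop_versions = [
    ("1.0", date(2023, 1, 1)),
    ("2.0", date(2024, 3, 15)),
    ("3.0", date(2025, 2, 1)),
]


def version_in_effect(versions, on_date):
    """Return the latest version whose effective date is on or before on_date, else None."""
    candidates = [(effective, version) for version, effective in versions if effective <= on_date]
    return max(candidates)[1] if candidates else None


print(version_in_effect(sop_versions, date(2024, 3, 14)))  # 1.0
print(version_in_effect(sop_versions, date(2024, 3, 15)))  # 2.0
print(version_in_effect(sop_versions, date(2022, 6, 1)))   # None
```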

Periodic Review

Going back to your SOPs on a schedule to confirm they're still accurate. Usually annual, sometimes every two years. "Periodic reviews not performed on schedule" is a surprisingly common audit finding. Nobody loves doing this work, but skipping it gets noticed fast.

Deviation

Any departure from what the SOP or protocol says should have happened. An instrument breaks, a step gets skipped, an incubation time goes on longer than it should have. Deviations happen in every lab. The problem is never that they happened - it's failing to document them when they do.

Change Control

The formal process for making changes to processes, systems, or documents. Propose, evaluate impact, get approval, document. Exists to prevent well-intentioned changes from accidentally creating regulatory problems nobody anticipated.

CAPA (Corrective and Preventive Action)

Corrective action fixes the immediate problem. Preventive action deals with the root cause so it doesn't recur. Every quality system has a CAPA process. Whether it actually works depends on whether people dig into root causes or just document the obvious fix and move on.

Quality Assurance (QA)

The overarching set of processes that make sure lab operations meet predefined quality standards before something goes wrong, as opposed to quality control (QC), which catches problems after the fact. QA integrates with LIMS and QMS to automate checks, enforce procedures, and maintain records. In practice, QA is the reason you have SOPs, training requirements, and periodic audits - it's the system that holds everything else accountable.

Data Integrity and Compliance

ALCOA

Five letters that follow you through your entire career in regulated science: Attributable, Legible, Contemporaneous, Original, Accurate. The baseline data integrity standard, originally from FDA guidance but now referenced by regulators worldwide. The framework predates electronic records by decades, which is part of why it holds up so well - the principles apply whether you're writing in a paper notebook or entering data into a cloud-based ELN. Every data integrity inspection, warning letter, and audit finding traces back to one or more of these five attributes in some way.

ALCOA+ (ALCOA Plus)

Extends the original framework with four additional attributes: Complete, Consistent, Enduring, Available. Nine total. The "plus" additions address gaps that became more apparent as labs moved to electronic systems and data volumes grew. Complete covers the inconvenient results that sometimes go unrecorded. Enduring deals with long-term data preservation across software migrations and format changes. Available means being able to actually produce a record when asked, not just technically having it somewhere. When regulators review your records, these nine attributes are the lens they're using.

Attributable

Who generated this data? In an ELN, that means authenticated logins and audit trail entries showing which user did what. On paper, initials and dates in permanent ink.

Legible

Can you read it? Not just today - years from now, someone who wasn't involved in the work needs to be able to make sense of it. If your handwriting is illegible or your file format is proprietary and the software no longer exists, you've got a problem.

Contemporaneous

Record data when you generate it. Not three weeks later from memory. Not from sticky notes you found in your lab coat. At the time of the activity, or close to it.

Original

The first recording of the data must be preserved. Copies can serve as the record, but only if verified as true copies. The raw file from your instrument is the original. The Excel table you made from those numbers is derived data.

A good illustration of how far this principle extends (pointed out to me by a good friend a few years ago): scribbling a cell count on your glove because there's no paper nearby technically makes that glove raw data. It should, strictly speaking, go in the lab notebook. Nobody is recommending that, of course, so best keep a small notebook at every bench instead.

Accurate

Does the record match what actually happened? Transcription errors, selective rounding, leaving out inconvenient results - all failures of accuracy. Sounds obvious until you see how routinely it goes wrong.

Complete

Record everything. Including the failed runs. Including the results that didn't fit your hypothesis. Including the controls that looked weird. Deleting inconvenient data is a serious integrity violation. Full stop.

Consistent

Related records need to agree with each other. Your notebook says Tuesday; the instrument log says Wednesday. That's a consistency problem.

Enduring

Records must last. Paper needs permanent ink, not pencil. Electronic records need to survive software upgrades, server migrations, and format obsolescence. If you can't open the file in fifteen years, you've failed this one.

Available

Can you actually produce the record when asked? Data on a backup tape in a warehouse that takes four weeks to retrieve doesn’t meaningfully count as "available."

Audit Trail

The automatic, timestamped log of every action on a record. Who created it, who changed it, what changed, when. Can't be turned off by users. If your system lets people disable the audit trail or tamper with it, that's a red flag.
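As a rough illustration of the idea, here is a toy record that logs every write automatically. All class and field names are hypothetical. A real system enforces this at the database and application level, where users cannot reach it; plain Python cannot truly prevent tampering, so treat this as a sketch of the shape of the data, not of the protection.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)  # frozen: an event cannot be modified after it is written
class AuditEvent:
    timestamp: datetime
    user: str
    action: str
    field: str
    old_value: str
    new_value: str


class AuditedRecord:
    """A record whose every write is logged; there is no switch to turn logging off."""

    def __init__(self, user, **fields):
        self._fields = {}
        self._trail = []
        for name, value in fields.items():
            self._write(user, "create", name, value)

    def _write(self, user, action, name, value):
        old = self._fields.get(name, "")
        self._trail.append(
            AuditEvent(datetime.now(timezone.utc), user, action, name, str(old), str(value))
        )
        self._fields[name] = value

    def update(self, user, name, value):
        self._write(user, "update", name, value)

    def get(self, name):
        return self._fields[name]

    @property
    def trail(self):
        return tuple(self._trail)  # read-only copy of the log


record = AuditedRecord("asmith", cell_count=120)
record.update("bjones", "cell_count", 125)
for event in record.trail:
    print(event.user, event.action, event.field, event.old_value, "->", event.new_value)
```

Note that the log captures who, what, and when, with both the old and the new value, which is what lets a reviewer reconstruct the record's history.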

Electronic Signature

Not just clicking "OK." Under Part 11, electronic signatures must be tied to the record they authenticate and include the signer's name, date/time, and what the signature means - authored, reviewed, approved, etc.
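One way to picture "tied to the record" is a signature object that stores a fingerprint of the exact content that was signed. This is a simplified sketch with hypothetical names; it is not a cryptographic signature scheme and not a Part 11-compliant implementation, just an illustration of the linkage between signer, time, meaning, and record.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Signature:
    signer_name: str
    signed_at: datetime
    meaning: str        # e.g. "authored", "reviewed", "approved"
    record_digest: str  # SHA-256 fingerprint of the content that was signed


def digest(record_text: str) -> str:
    return hashlib.sha256(record_text.encode("utf-8")).hexdigest()


def sign(record_text: str, signer_name: str, meaning: str) -> Signature:
    return Signature(signer_name, datetime.now(timezone.utc), meaning, digest(record_text))


def still_valid(sig: Signature, record_text: str) -> bool:
    """The signature only holds if the record is byte-for-byte what was signed."""
    return sig.record_digest == digest(record_text)


entry = "Experiment 12: cell count 125"
sig = sign(entry, "Alex Smith", "approved")
print(still_valid(sig, entry))                # True
print(still_valid(sig, entry + " (edited)"))  # False
```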

Metadata

Data about your data. Who created a record, when, on what instrument, under what conditions, which software version. Regulators hold metadata to the same integrity standards as the data itself.

Data Governance

Organizational policies around data: who accesses it, how quality is maintained, retention schedules, disposal procedures. More management discipline than technology, but it matters a lot when something goes wrong.

True Copy

A verified reproduction that preserves the content, meaning, and context of the original. A PDF export of an ELN entry with audit trail and signatures intact counts. A screenshot with metadata cropped out does not.

Regulations and Standards

21 CFR Part 11

The FDA regulation that establishes the criteria under which electronic records and electronic signatures are considered legally equivalent to paper records and handwritten signatures. If your organization is FDA-regulated and uses electronic systems for GxP work, Part 11 defines the rules: audit trails, access controls, system validation, electronic signature requirements. It came into effect in August 1997, which means it was written well before cloud software, SaaS platforms, or most modern ELN and LIMS systems existed. The core requirements have barely changed since, and it still shapes how lab software gets selected, validated, and used across pharma, biotech, and medical devices.

EU Annex 11

EU GMP Annex 11 is the EU guidance annex for computerized systems used in GMP. It has similar requirements to Part 11 - validation, data integrity, audit trails, electronic signatures - with differences in specifics. Sell into both markets and you need to satisfy both.

GxP

Catch-all for "Good Practice" regulations. The "x" changes: GLP, GMP, GCP, GDP. What they share is the idea that quality, safety, and data integrity need documented procedures and proper oversight.

GLP (Good Laboratory Practice)

Rules for non-clinical lab studies that support regulatory submissions. Study planning, conduct, monitoring, recording, archiving, reporting. If your lab runs safety studies for pharma or agrochemical companies, GLP is your framework.

GMP (Good Manufacturing Practice)

GMP sets the requirements for how pharmaceutical, biologic, and medical device products must be manufactured to ensure they consistently meet quality and safety standards. Facility design, equipment, personnel, documentation, raw materials, production processes, QC - it covers the full picture. Researchers working in development often encounter GMP requirements earlier than expected, particularly when work starts transitioning toward clinical or commercial manufacturing.

GCP (Good Clinical Practice)

Ethical and scientific standards for clinical trials. Protects trial participants, ensures data credibility. If you've been involved in a clinical study as trial staff, you've probably sat through GCP training at some point. ICH E6 is the primary guideline.

ICH

International Council for Harmonisation. Develops guidelines meant to align requirements across the US, EU, Japan, and other markets. Their Q-series (quality), E-series (efficacy), and S-series (safety) get referenced by regulators globally.

ICH E6(R3)

ICH E6 is the guideline that governs how clinical trials are conducted, monitored, and documented. Participant protections, investigator responsibilities, data governance, audit trails - it covers the full picture. The current version, E6(R3), was finalized in January 2025 and formally adopted by the FDA that September. The updates reflect how clinical research has changed: more electronic records, more decentralized trial designs, a harder look at data integrity throughout. If your work touches clinical trials at any point in the chain, your processes need to align with this one.

FAIR Data Principles

Findable, Accessible, Interoperable, Reusable. Framework for making scientific data useful beyond its original experiment. NIH has required data management plans since January 2023, and FAIR is the framework most funders reference when evaluating those plans.

FDA

U.S. Food and Drug Administration. Regulates food, drugs, biologics, medical devices, cosmetics, tobacco. For lab scientists, FDA rules on documentation and data integrity determine how you work if it touches anything heading toward a submission or a manufacturing floor.

EMA

European Medicines Agency. The EU authority for evaluating medicinal products. Has been publishing increasingly specific guidance on data integrity, digital tools, and - more recently - AI use throughout the medicines lifecycle.

MHRA

UK's Medicines and Healthcare products Regulatory Agency. Independent from EMA since Brexit, with its own evolving framework. Published some of the clearest data integrity guidance in the industry. Currently developing its approach to AI regulation in healthcare.

PIC/S

Pharmaceutical Inspection Co-operation Scheme. An international network of regulatory authorities publishing GMP inspection guidance. Doesn't carry legal force on its own, but heavily influences how inspectors from member countries actually interpret GMP.

ISO 17025

International standard for testing and calibration lab competence. Common in environmental, food safety, and forensic labs. Covers management and technical requirements like methods, equipment, and quality assurance.

Computer System Validation (CSV)

Proving with documentation that a computerized system does what it's supposed to do, reliably. Write requirements (URS), test against them (IQ/OQ/PQ), document everything. Required for any GxP system. Frequently complained about. Still necessary.

Computer Software Assurance (CSA)

FDA guidance that introduced a risk-based alternative to traditional validation, initially developed in the context of medical device software. Rather than testing every system function to the same level of rigor, CSA directs effort toward the functions with the highest potential impact on patient safety and data integrity. It hasn't formally replaced CSV across all GxP environments, but it has shifted how many organizations think about where to focus their validation resources.

IQ/OQ/PQ

Installation Qualification, Operational Qualification, Performance Qualification. The three classic validation stages. Was it installed right? Does it work as specified? Does it perform under real conditions? If you've done system validation, you've lived these.

User Requirements Specification (URS)

A document that says what a system needs to do, from the user's perspective. The starting point for selection and validation. Skip this and you end up with software that technically works but doesn't actually do what your lab needs.

Validation Master Plan (VMP)

High-level document describing how your organization approaches validation overall. Scope, responsibilities, procedures, timelines. Individual system validations fit within this plan.

GAMP 5

Good Automated Manufacturing Practice, from ISPE. The go-to framework for validating computerized systems in GxP settings. Uses software categories (1, 3, 4, and 5; the old category 2 was retired) to scale validation effort to risk. Second edition came out in 2022 and started folding in CSA concepts.

Predicate Rules

The actual FDA regulations - GLP, GMP, GCP - that require records and signatures in the first place. Part 11 applies when you go electronic to meet those predicate rules. Knowing which predicate rules apply to your lab determines which Part 11 requirements matter to you.

Data Integrity Guidance

Specific guidance documents interpreting how ALCOA+ works in practice. The main ones to know: FDA's "Data Integrity and Compliance With Drug CGMP" (2018), MHRA's "GxP Data Integrity Guidance and Definitions" (2018), and WHO's "Guideline on Data Integrity" (TRS No. 1033, Annex 4, 2021) - which replaced the earlier TRS 996 Annex 5 reference you'll still see cited in older documents. The MHRA document remains the most readable starting point. Warning letters from the FDA are worth reading alongside these - they show where the theory meets actual inspection findings.

Warning Letter

A public letter from the FDA telling a company it has serious violations. Data integrity failures, missing audit trails, inadequate validation show up constantly. Warning letters are searchable on the FDA website. Uncomfortable reading, but educational.

Access and Security

Role-Based Access Control (RBAC)

Restricting what users can do based on their assigned user role. A bench scientist creates and edits entries. A supervisor approves. An admin configures templates. Nobody does everything. This is how you prevent both accidental and intentional data integrity problems.
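At its simplest, RBAC is a lookup from role to allowed actions. The roles and permission names in this sketch are invented to mirror the example above; real platforms have far more granular permission sets.

```python
# Hypothetical role-to-permission mapping mirroring the example in the text
ROLE_PERMISSIONS = {
    "scientist": {"create_entry", "edit_entry"},
    "supervisor": {"approve_entry"},
    "admin": {"configure_templates"},
}


def is_allowed(role: str, action: str) -> bool:
    """Unknown roles and unlisted actions are denied by default."""
    return action in ROLE_PERMISSIONS.get(role, set())


print(is_allowed("scientist", "edit_entry"))     # True
print(is_allowed("scientist", "approve_entry"))  # False
print(is_allowed("guest", "create_entry"))       # False
```

The deny-by-default behavior is the important design choice: a permission that nobody thought to define should never be silently granted.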

Single Sign-On (SSO)

One set of credentials for multiple systems. Your company login gets you into the ELN, the LIMS, and whatever else is connected. Simpler for users, easier for IT, more secure than everyone juggling separate passwords across ten systems.

Multi-Factor Authentication (MFA)

More than just a password to log in. Password plus a code from your phone, or a hardware token. Increasingly the expectation for any system with sensitive research or patient data.

Data Sovereignty

Data is governed by the laws where it's physically stored. Pick a cloud provider with servers in another country and that country's regulations may apply to your data, regardless of where your company sits. Real consideration for multinational labs choosing cloud platforms. EU and UK-based researchers, or those sharing data with partners in those regions, also need to factor in GDPR compliance when evaluating where their research data is stored and who can access it.

Institutional Data Ownership

Research data belongs to the institution, not to individual researchers. When a postdoc leaves, their notebook data stays behind. Sounds obvious until someone departs and you realize all their experiments lived in a personal Dropbox folder or some free ELN account (which is generally tied to the individual). An institutional ELN makes this a non-issue.

Integration and Interoperability

API (Application Programming Interface)

APIs are how different software systems talk to each other. Your ELN talks to your LIMS, your LIMS talks to your instruments, data moves between them through APIs without anyone retyping numbers into a spreadsheet.

Instrument Integration

Connecting instruments directly to your informatics systems so data flows automatically. The alternative is transcribing numbers from a screen into your notebook by hand, which can be slow and error-prone.

Middleware

Software that translates between instruments and informatics systems. You see it a lot in clinical labs where twenty different analyzer models from five different manufacturers all need to feed into one LIS.

Data Export

Getting your data out of a system in a usable format. Matters for regulatory submissions, vendor switches, and long-term preservation. If your ELN traps data in a proprietary format with no export, that's vendor lock-in, and it'll cost you eventually.

FAIR Compliance

How well your data management aligns with FAIR principles. Grant agencies are paying increasing attention. NIH has required data management plans since early 2023, and FAIR is the framework most funders reference.

Webhook

An automated notification from one system to another when something happens. Your LIMS pings your project management tool when a sample test finishes, for instance. Lighter than constantly polling an API.
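In practice a webhook is usually just an HTTP POST with a small JSON body, sent by the system where the event happened. The sketch below builds such a request with Python's standard library; the URL, event name, and payload fields are placeholders, and the actual send is left commented out so the example runs offline.

```python
import json
import urllib.request


def build_webhook_request(url: str, event: str, payload: dict) -> urllib.request.Request:
    """Package an event as a JSON POST request, ready to send to the receiving system."""
    body = json.dumps({"event": event, "data": payload}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Placeholder URL and event name; a real integration would use whatever the receiver documents
req = build_webhook_request(
    "https://example.com/hooks/lims",
    "sample.test_completed",
    {"sample_id": "S-0042", "result": "pass"},
)
print(req.get_method(), req.full_url)
# urllib.request.urlopen(req) would actually deliver the notification
```

The sender pushes once when something happens, which is why this is lighter than the receiver asking "anything new?" every few seconds.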

Data Lake / Data Warehouse

Centralized repositories where you aggregate data from multiple sources - ELN entries, LIMS results, instrument files - for cross-study analysis or machine learning. More common at big organizations, but the concept is trickling down as AI tools make aggregated data more useful.

Emerging Terms

AI/ML in Lab Software

AI and machine learning features are now built into most lab software platforms in some form. The useful end of the spectrum includes things like natural language search, automated data extraction from instrument outputs, and pattern recognition across large experimental datasets. The other end is mostly rebadged existing functionality with "AI-enabled" added to the product page. When evaluating platforms, the more important question isn't which AI tools are included - it's how AI-generated or AI-assisted content fits into your data integrity framework. That's an area the industry is still figuring out, and for regulated labs the stakes of getting it wrong are significant.

Generative AI

AI that creates new content - text, code, images - from patterns in training data. In the lab context, tools like ChatGPT or Claude being used to draft documentation, summarize results, help with analysis, etc. Useful, but raises data integrity questions that most labs haven't figured out yet.

Hallucination (AI)

When an AI writes something that reads as confident and specific but is factually wrong. In lab documentation, that could mean an invented reference, a fabricated method step, a result that doesn't match your actual data. Looks fine on the surface. The content, though, is fiction. Worth noting that as of early 2026, this is still a frequent enough occurrence with current AI tools that assuming accuracy is the wrong default. Every AI-generated statement in a regulated record needs verification against source data.

Model Drift

When an AI's behavior shifts over time because the underlying model got updated or the data it encounters has changed. Matters if you're using AI for analysis - the same input might give slightly different output after a model update, and you might not even know the update happened.

Digital Twin

A virtual replica of a physical process or system. In pharma manufacturing, digital twins simulate production so you can predict outcomes and tweak parameters without running actual batches. More common at big manufacturers right now, but the concept is expanding.

Cloud-Based / SaaS (Software as a Service)

Software delivered over the internet on a subscription basis. You access it through a browser; the vendor handles infrastructure, updates, security patches, and backups. Most modern ELN and LIMS platforms work this way now. The pitch is real: lower upfront costs than buying servers, no in-house IT team needed to maintain the system, automatic updates so you're always on the current version, and the ability to scale storage as your data grows. The trade-off is that your data sits on someone else's infrastructure, which makes data sovereignty and vendor lock-in worth thinking about before you sign.

On-Premise

Software installed on your own servers. Maximum control over data and infrastructure, but you own the maintenance, updates, security, and keeping everything running. Some regulated organizations still require this for their most sensitive data.

Hybrid Cloud

A mix. Sensitive data on your own servers, less sensitive functions in the cloud. Compromise between full control and full convenience without committing entirely to either.

Blockchain (in Lab Context)

Distributed ledger technology applied to lab data. The pitch is that recording data hashes on a blockchain creates immutable proof that records haven't been tampered with. Still very early days for lab use. Interesting for high-stakes data integrity situations, but not widely adopted and probably won't be for a while.
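The core trick behind the "immutable proof" claim is hash chaining: each hash covers the current record plus the previous hash, so changing any earlier record changes every hash after it. The sketch below shows only that mechanism on a single machine. It is not a distributed ledger, and the record strings are made up.

```python
import hashlib


def chain_hashes(records):
    """Hash each record together with the previous hash, so the hashes form a chain."""
    hashes, prev = [], ""
    for rec in records:
        prev = hashlib.sha256((prev + rec).encode("utf-8")).hexdigest()
        hashes.append(prev)
    return hashes


def verify(records, hashes):
    """Recompute the chain and compare it with the hashes recorded earlier."""
    return chain_hashes(records) == hashes


records = ["run 1: yield 82%", "run 2: yield 79%", "run 3: yield 85%"]
recorded = chain_hashes(records)
print(verify(records, recorded))   # True

tampered = ["run 1: yield 92%", "run 2: yield 79%", "run 3: yield 85%"]
print(verify(tampered, recorded))  # False
```

What a blockchain adds on top is that the recorded hashes live on many independent machines, so nobody can quietly recompute and replace them.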

This glossary is maintained by the team at IGOR. We update it as terms emerge and the regulatory landscape shifts. Think we missed something? Let us know.