In Part 1 of this series, we walked through what the FDA, EMA, MHRA, and ISPE have published on the use of AI in regulated life sciences, drug development, and medical products. We also covered the April 2026 Purolea Cosmetics Lab warning letter, one of the clearest public FDA enforcement examples to date involving inappropriate AI use in pharmaceutical manufacturing documentation. The firm relied on AI agents to create drug product specifications, procedures, and production records without adequate human review. The FDA treated that as a quality unit failure under 21 CFR 211.22(c).

Most of the draft guidances, frameworks, and principles that have been published are aimed at the regulatory submission end of the pipeline: sponsors, RA teams, and quality and validation professionals working out how to get AI-assisted work through an agency review. But the underlying principles reach further than that. They are highly relevant for the scientist at the bench who's wondering whether it's OK to use ChatGPT to help draft a notebook entry.

The short answer is that it depends, and on more than just the tool. The longer answer requires working through how existing data integrity requirements apply to AI-generated content, where the actual compliance risks are, and what "human oversight" means in practice when an AI writes faster than you can review.

Important Disclaimer: This post is for informational purposes only and reflects our understanding of publicly available guidance as of the date of publication. It is not legal or regulatory advice. Requirements vary by jurisdiction, product type, and intended use. If you're making compliance decisions, talk to a qualified regulatory professional who knows your specific situation.

When AI Output Becomes a Regulated Record

The relevant regulations predate AI, so the foundational principle is not a new one.

If an electronic record is used to satisfy an FDA recordkeeping requirement, 21 CFR Part 11 applies - audit trails, access controls, electronic signatures. If a computerized system is used in a GxP process, EU Annex 11 applies - validation proportionate to risk, output reliability. When an AI tool generates text, performs analysis, or processes data that is incorporated into a GxP record or used to support a regulated decision, that output should generally be treated as part of the controlled record, or at least as a traceable artifact supporting that record.

If you use an AI assistant to draft a methods section for a notebook entry and that draft gets incorporated into the final record, it's part of the record. If it informs a regulated or GxP-relevant decision about an experiment, it contributed to the decision-making process. Once AI output is incorporated into a regulated record or used to support a regulated decision, the question isn't whether data integrity expectations apply. The question is how to work within them.

The ISPE GAMP AI Guide, published in July 2025, is the most detailed industry framework available for validating AI in GxP environments. A practical takeaway for most labs: when AI output ends up in a regulated record or supports a regulated decision, it's best to keep a trail. That means the prompt, the output, and the record of human review - not just the final polished text.
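As a rough illustration of what "keeping a trail" could look like in data terms, here is a minimal Python sketch. The class name, field names, and example values are all hypothetical; they are not taken from the GAMP guide or from any particular ELN, and a real system would store this inside its own controlled, audit-trailed records.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
import hashlib


@dataclass(frozen=True)
class AIAssistanceRecord:
    """Hypothetical trail entry for one AI-assisted drafting step."""
    tool_name: str        # name of the approved AI tool (assumed field)
    model_version: str    # model or version identifier as reported by the tool
    prompt: str           # what the AI was asked to do
    output: str           # the raw text the AI returned, before editing
    reviewed_by: str      # the accountable human reviewer
    review_note: str      # what the reviewer actually checked
    reviewed_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )

    def output_sha256(self) -> str:
        """Fingerprint of the raw AI output, so a later copy can be compared to it."""
        return hashlib.sha256(self.output.encode("utf-8")).hexdigest()


# Example values are invented for illustration only.
record = AIAssistanceRecord(
    tool_name="ExampleAssistant",
    model_version="example-v1",
    prompt="Draft a methods paragraph from these bench notes.",
    output="Samples were incubated at 37 C for 30 min.",
    reviewed_by="J. Doe",
    review_note="Checked incubation time and temperature against raw notes.",
)
print(record.output_sha256()[:12])
```

The point of the sketch is only that the prompt, the unedited output, and the review are captured together, rather than leaving the final polished text as the sole surviving artifact.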

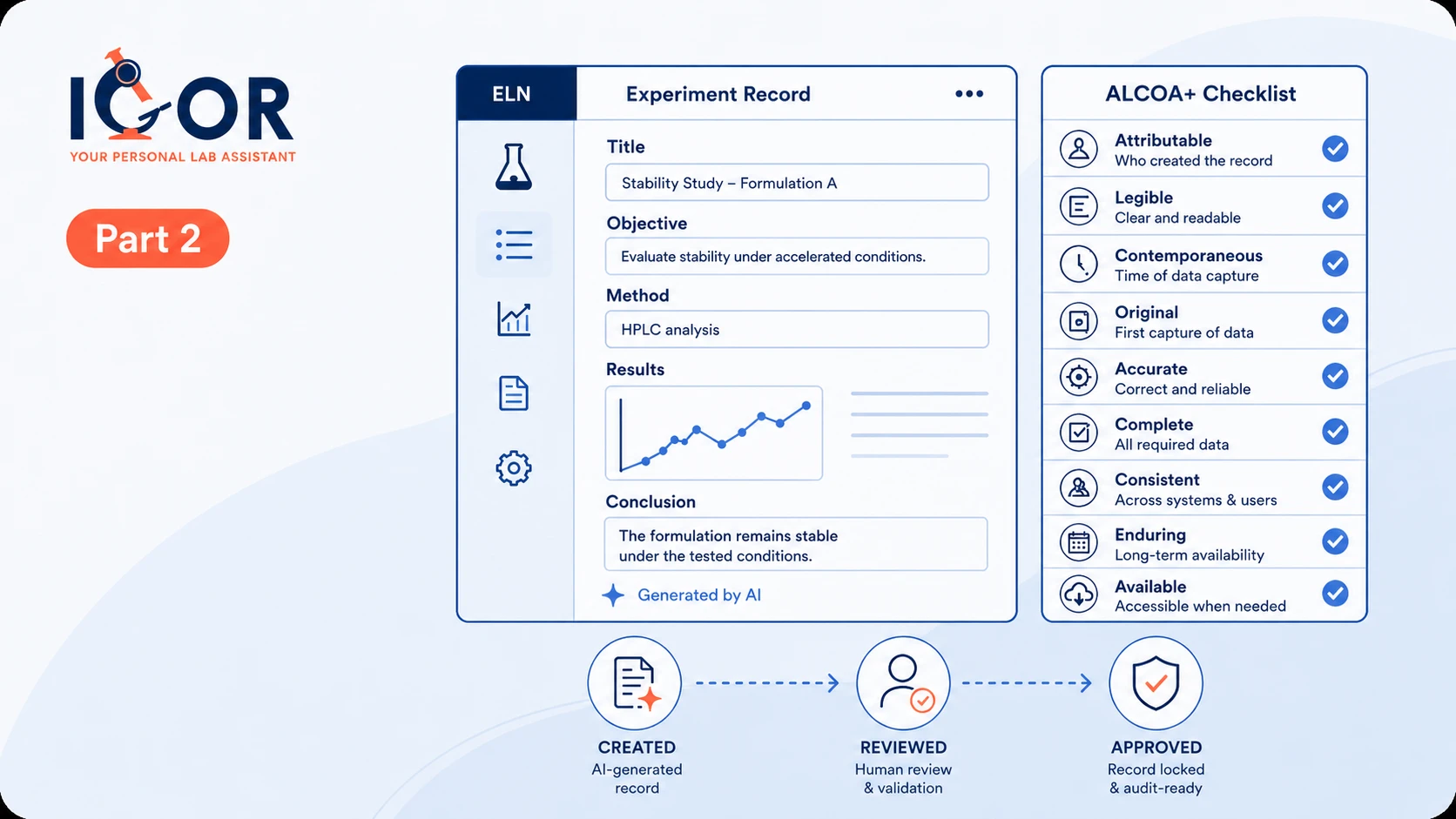

Applying ALCOA+ to AI-Assisted Lab Documentation

ALCOA+ is a common framework regulators and quality teams use to evaluate data integrity. Here's what each attribute actually means when AI is involved.

Attributable

Every record should be attributable to the person, system, or process that created it, and to the accountable person who reviewed or approved it. When a scientist types an entry directly into an electronic lab notebook, attribution is straightforward. When an AI generates the first draft, it gets more complex.

In GxP contexts, the accountable human, role, or quality unit remains responsible for how an AI output is used, reviewed, approved, or relied upon. AI is a tool, not an accountable actor. But for attribution to be meaningful, the record should reflect how it was produced. If a polished methods section was AI-generated but the record contains no indication of AI involvement, there may be a traceability gap between what the record implies and how it was actually created. Depending on the workflow and how the output is used, that gap can become a compliance issue.

Best practice: note in the record that AI assistance was used. Something like "Initial draft assisted by [AI tool]. Reviewed and edited for accuracy and verified by [scientist name]" maintains attribution while being transparent about the process.

Legible

AI-generated text is typically quite legible. Sometimes it's too polished, which can mask a different problem: if the AI uses technical language the scientist doesn't fully understand, the record may be legible in form but not in substance. A scientist should be able to explain and stand behind the content they sign, or point to the appropriate source data, procedure, or reviewer for specialized details.

Contemporaneous

In GxP contexts, records are expected to be made at or near the time of the activity they describe. AI can actually help with this - using it to structure and draft a notebook entry at the bench, during or immediately after an experiment, is better than scribbling notes and writing them up three days later. But it cuts the other way too. AI makes it easier to produce a detailed, polished narrative from sparse notes written weeks after the fact. The timestamps don't lie, though: they'll show when the entry was created, not when the work was done.

Best practice: use AI to help document close to real time, not to reconstruct records from notes made long after the work was done.
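To make the timestamp point concrete, here is a small Python sketch that compares when the work was done with when the entry was created. The function names are hypothetical, and the 24-hour threshold is purely an illustrative assumption; it is not a regulatory limit, and the acceptable lag should come from your own procedures.

```python
from datetime import datetime, timedelta


def documentation_lag(activity_time: datetime, entry_created: datetime) -> timedelta:
    """Time between the work being done and the entry being created."""
    return entry_created - activity_time


def is_contemporaneous(
    activity_time: datetime,
    entry_created: datetime,
    max_lag: timedelta = timedelta(hours=24),  # assumed threshold, for illustration
) -> bool:
    """True if the entry was created after the activity and within max_lag of it."""
    lag = documentation_lag(activity_time, entry_created)
    return timedelta(0) <= lag <= max_lag


experiment = datetime(2025, 3, 3, 14, 0)
print(is_contemporaneous(experiment, datetime(2025, 3, 3, 16, 30)))  # True
print(is_contemporaneous(experiment, datetime(2025, 3, 6, 9, 0)))    # False
```

A check like this doesn't prove a record is contemporaneous, but it can flag entries where a polished narrative appeared days after the work it describes.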

Original

In the context of AI-assisted documentation, this gets complicated. Is the original the scientist's raw notes? The AI prompt? The AI output? The edited final version?

From a regulatory perspective, the original is generally the first durable capture of the actual data, or a verified true copy where the applicable framework allows it. An instrument readout, a direct observation, a measurement. In most documentation workflows, an AI-generated narrative about that data would then be a derived record, not the original.

Best practice: capture raw data directly into your ELN or data system. Use AI to help write the narrative around that data, not as a substitute for the original data capture.

Accurate

This is one of the most significant compliance risks with generative AI. Large language models can hallucinate - and this is not a rare occurrence. They can produce confident, well-structured, grammatically correct text that is factually wrong. Invented reagent lot numbers. Misquoted concentrations. Method details that don't match what was actually done. References to published literature that doesn't actually exist.

In a non-regulated context, that's annoying. In a GxP context, if unverified AI-generated content is incorporated into a controlled record or used to support a regulated decision, it can become a significant data integrity problem. For generative AI, hallucination is a specific risk that should be addressed in the workflow's risk assessment and mitigation plan.

The risk is compounded because AI hallucinations can be hard to catch. The output reads as authoritative and specific. A scientist reviewing quickly might miss a fabricated detail that an auditor or inspector later flags.

Best practice: verify every factual AI-generated statement in a regulated record against source data, an approved procedure, or another controlled reference. Treat AI output the way you'd treat a first draft from a new lab member: assume it needs checking.

Complete

Records must be complete for their regulated purpose, including relevant data and observations, not just results that support the expected outcome. AI tools, especially those trained to be helpful, can unintentionally make records look cleaner and more complete than the underlying work actually was. Ask an AI to write up an experiment and it may lean toward clean, expected findings and quietly smooth over anomalous observations, gaps in your documentation, failed controls, or unexpected results you mentioned in passing, or forgot to mention altogether.

Best practice: after reviewing AI-generated content, explicitly check whether all relevant observations, including negative results and unexpected outcomes, are captured in the final record.

Consistent

AI can help with consistency by applying standardized templates and terminology. But different prompts can produce different phrasings for the same procedure, and the AI version may not match the terminology established in your SOPs and quality system.

Best practice: prompt AI with your actual SOP terminology and check outputs against your existing documentation standards.

Enduring and Available

Records must remain accessible and preserved for as long as required, with any changes controlled and traceable. AI-generated content incorporated into a controlled record is subject to the same retention requirements as anything else. The need for appropriate version history and audit trails doesn't disappear because AI helped create the initial draft.

Three AI Data Integrity Risks Labs Should Be Watching Now

Risk 1: Hallucinated content in controlled records

Scientists are busy. Submission deadlines, performance reviews, conference presentations, and several parallel experiments that all need to be documented in detail, reviewed, and witnessed. Some scientists who use AI tools for personal tasks bring those habits to work: they ask the same tool to summarize an experiment and copy the output into a notebook entry without carefully checking the details, because the current incubation time is up and they need to head back into the lab. The entry looks professional, but the details might be wrong.

A blanket ban may simply drive use underground. The better approach is training. Staff need to understand not just how to prompt AI effectively, but how to validate what comes back. Every factual AI-generated statement in a regulated record should be verifiable against source data, an approved procedure, or another controlled reference. If a scientist can't point to the record or controlled reference that supports a factual claim the AI made, that claim shouldn't be in the final entry.

Risk 2: Unchecked outputs undermining data integrity

The Purolea warning letter is the extreme version: a quality unit that relied on AI-generated compliance documentation without verifying whether it was accurate, complete, or fit for purpose. Subtler versions of the same problem can happen in better-run organizations.

Always remember: AI should complement human decision-making, not replace it. The risk isn't just AI inventing a wrong number in a data field. It's AI generating an entire procedure, specification, or quality document that looks complete and professional - and nobody checking whether it actually covers what it needs to cover. That's what happened at Purolea. The AI produced documentation. The company assumed that was the same as compliant documentation. It wasn't. Verifying that an AI-generated document is actually adequate for its intended purpose is the human's job.

In regulated workflows, AI summaries and narratives need to tie back to controlled, traceable source data. If an AI generates a summary of your stability results, an auditor should be able to trace every number in that summary back to the instrument system that generated it or the LIMS or ELN where it was captured. If the link between the AI output and the source is unclear, the summary may be unverifiable.
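One way to picture that traceability check is a deliberately naive Python sketch that lists every number in an AI-written summary with no exact match among the values captured in the source system. The function name and the example data are hypothetical. The comparison is a plain string match, so "98.70" and "98.7" would not match, and units, signs, and context are ignored. Treat it as an aid to human review, not a replacement for it.

```python
import re

# Matches simple unsigned integers and decimals such as "3" or "98.7".
NUMBER = re.compile(r"\d+(?:\.\d+)?")


def untraceable_numbers(summary: str, source_values: set[str]) -> list[str]:
    """Return numbers in the summary that have no exact match in the source values."""
    return [n for n in NUMBER.findall(summary) if n not in source_values]


# Invented example: values as they might be recorded in a LIMS or ELN.
source_values = {"98.7", "99.1", "3", "6"}
summary = "Assay was 98.7% at 3 months and 99.4% at 6 months."

print(untraceable_numbers(summary, source_values))  # ['99.4']
```

Here the summary reports 99.4 where the source holds 99.1, which is exactly the kind of plausible-looking discrepancy a quick read can miss.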

AI tools used in regulated contexts should be governed. In practice, that means using approved, enterprise-grade tools with appropriate data handling agreements - not personal or public AI tools where data handling, retention, access, or model-training terms may be inappropriate for regulated or confidential information. Access controls, SOPs, and a documented risk assessment should sit behind any AI tool used in a regulated workflow.

Risk 3: Inadequate audit trails for AI activity

In many labs, AI assistance may leave no trace in the official record. A scientist uses an AI to draft a notebook entry, copies the text, edits it slightly, and enters it into the ELN. The audit trail shows the scientist creating the entry. Nothing about the AI.

If regulators or auditors ask - and the Purolea letter makes that question more foreseeable - they may want specific information. What was the AI asked to do? Which model and version? Who reviewed the output and accepted it? Which sections of the record are AI-generated versus human-authored?

If you can't answer those questions, there may be a traceability gap that weakens the credibility of the record. It's also worth noting that assuming undisclosed AI use will go unnoticed is not a safe position. Writing style, sentence structure, and phrasing patterns can raise questions during review, and AI detection tools - while not infallible - are increasingly part of quality and compliance workflows. More fundamentally, if AI use is discovered during an inspection and it wasn't documented, the question immediately shifts from "was AI used appropriately?" to "what else wasn't disclosed?" And that's a much harder conversation.

For AI used in a GxP workflow, a reasonable standard is to treat it like any other computerized system whose outputs may affect regulated records or decisions: define the intended use, assess the risk, validate or qualify the workflow as appropriate, and maintain audit trails and review records.

What Your ELN Needs to Support AI Compliance

If your lab is using AI for documentation or analysis, your electronic lab notebook often becomes one of the primary systems of record for demonstrating compliance on the documentation side. Here's what it should support.

Comprehensive audit trails

Changes to controlled records should be captured with user attribution, timestamps, and enough detail to reconstruct the record history. IGOR's audit trail is designed to capture record changes with user attribution and timestamps. For AI-assisted workflows, that audit trail can provide the foundation for documenting when record changes occurred, while a defined review or signature step can demonstrate that human review took place.

Electronic signatures with meaning

When a scientist signs a record, the meaning of that signature should be clear: authorship, review, approval, witnessing, or verification, depending on the workflow. For AI-assisted records, a review or approval signature should reflect genuine review and verification, not just acceptance of a draft. When a reviewer signs a notebook entry in IGOR, they certify that they have reviewed the information and that, to the best of their knowledge, it is true and accurate. In the context of AI-assisted records, that attestation is doing real work.

Metadata fields for AI documentation

Your ELN should support a way to note when AI assistance was used in creating a record. A metadata tag, a checkbox, a notes field. IGOR's customizable experiment databases can support team-defined metadata fields for experiments, which means you can build AI documentation directly into your standard entry structure. If your system doesn't support this natively, an SOP requiring scientists to note AI use in a designated section of each entry provides a workable fallback.
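As an illustration of what team-defined AI metadata might contain, the Python sketch below checks whether an entry flagged as AI-assisted carries the details an auditor is likely to ask about. The field names are hypothetical and do not reflect IGOR's or any other ELN's actual schema; the logic would need adapting to whatever fields your system really exposes.

```python
# Hypothetical field names; a real ELN would define its own.
REQUIRED_AI_FIELDS = (
    "ai_tool",
    "model_version",
    "ai_generated_sections",
    "reviewed_by",
)


def missing_ai_fields(entry_metadata: dict) -> list[str]:
    """List required AI fields that are absent or empty on an AI-assisted entry."""
    if not entry_metadata.get("ai_assisted"):
        return []  # nothing to check if no AI assistance was declared
    return [name for name in REQUIRED_AI_FIELDS if not entry_metadata.get(name)]


entry = {
    "ai_assisted": True,
    "ai_tool": "ExampleAssistant",
    "reviewed_by": "J. Doe",
}
print(missing_ai_fields(entry))  # ['model_version', 'ai_generated_sections']
```

A check like this only works if AI use is declared in the first place, which is why the checkbox or tag matters as much as the detail fields behind it.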

Linked records

When AI-assisted documentation references primary data, those links should be explicit and navigable. An auditor should be able to follow from the narrative to the underlying data, which provides the verification path needed to assess accuracy. IGOR's inventory and sample management integration can allow reagent lot numbers, sample IDs, important metadata, sample relationships, usage history, and storage locations referenced in a notebook entry to be traced back to live records in the system.

Procedure traceability matters too. If an AI-assisted entry references a method or procedure, it should be clear exactly which approved version was in effect at the time. In IGOR, SOPs are linked directly to notebook entries at the exact approved version - including who approved it and when - so that link is part of the record, not something that has to be reconstructed later.

AI Data Integrity in GxP: The Standard That's Emerging

Across the frameworks, draft guidelines, and principles published since 2024, a consistent picture is forming for AI-assisted lab documentation.

For regulated records, the accountable human and the regulated organization remain responsible, regardless of how the record was produced. Human review needs to be genuine, not just a rubber stamp. When AI output contributes to a controlled record or regulated decision, AI involvement should be documented and traceable. The underlying data integrity requirements apply just the same. ALCOA+ doesn't have an AI exemption.

None of that is new. It's existing principles applied to a new tool. And that's actually the useful framing here - labs that already have strong data integrity practices, good ELN discipline, and a culture of thorough documentation are well-positioned to use AI assistance responsibly. Labs that are already struggling with data integrity may find that AI amplifies existing problems rather than fixing them. AI gives those underlying problems a professional finish while leaving them exactly where they were.

Purolea is the extreme end of that spectrum. But the same basic failure mode can appear in milder forms in otherwise well-run labs. A scientist under pressure, a notebook entry drafted with an AI tool, a quick review that missed a fabricated detail. The scale and the baseline culture differ. But the gap between the two is smaller than most labs would like to think.

The good news is that none of this requires waiting for finalized guidance before acting. Document AI use. Verify outputs against source data. Build review and verification steps that mean something. Those are things any lab can start doing today, and they hold up regardless of what the final regulations say.

Part 3 of this series moves from principles to practice: how to write your lab's AI use policy, what training your team needs, and how to think about audit questions as they become more likely.

References

- FDA, "Considerations for the Use of Artificial Intelligence to Support Regulatory Decision-Making for Drug and Biological Products," Draft Guidance, January 2025. Docket No. FDA-2024-D-4689. fda.gov

- EMA, "Reflection Paper on the Use of Artificial Intelligence (AI) in the Medicinal Product Lifecycle," EMA/CHMP/CVMP/83833/2023, adopted September 2024. ema.europa.eu

- FDA and EMA, "Guiding Principles of Good AI Practice in Drug Development," January 2026. fda.gov

- MHRA, "Impact of AI on the Regulation of Medical Products," April 2024. gov.uk

- ISPE, "GAMP Guide: Artificial Intelligence," July 2025. ispe.org

- FDA Warning Letter to Purolea Cosmetics Lab, MARCS-CMS 722591, April 2, 2026. fda.gov
